
Why are U.S. taxpayers giving billions of dollars to support the likes of the sugar and meat industries?

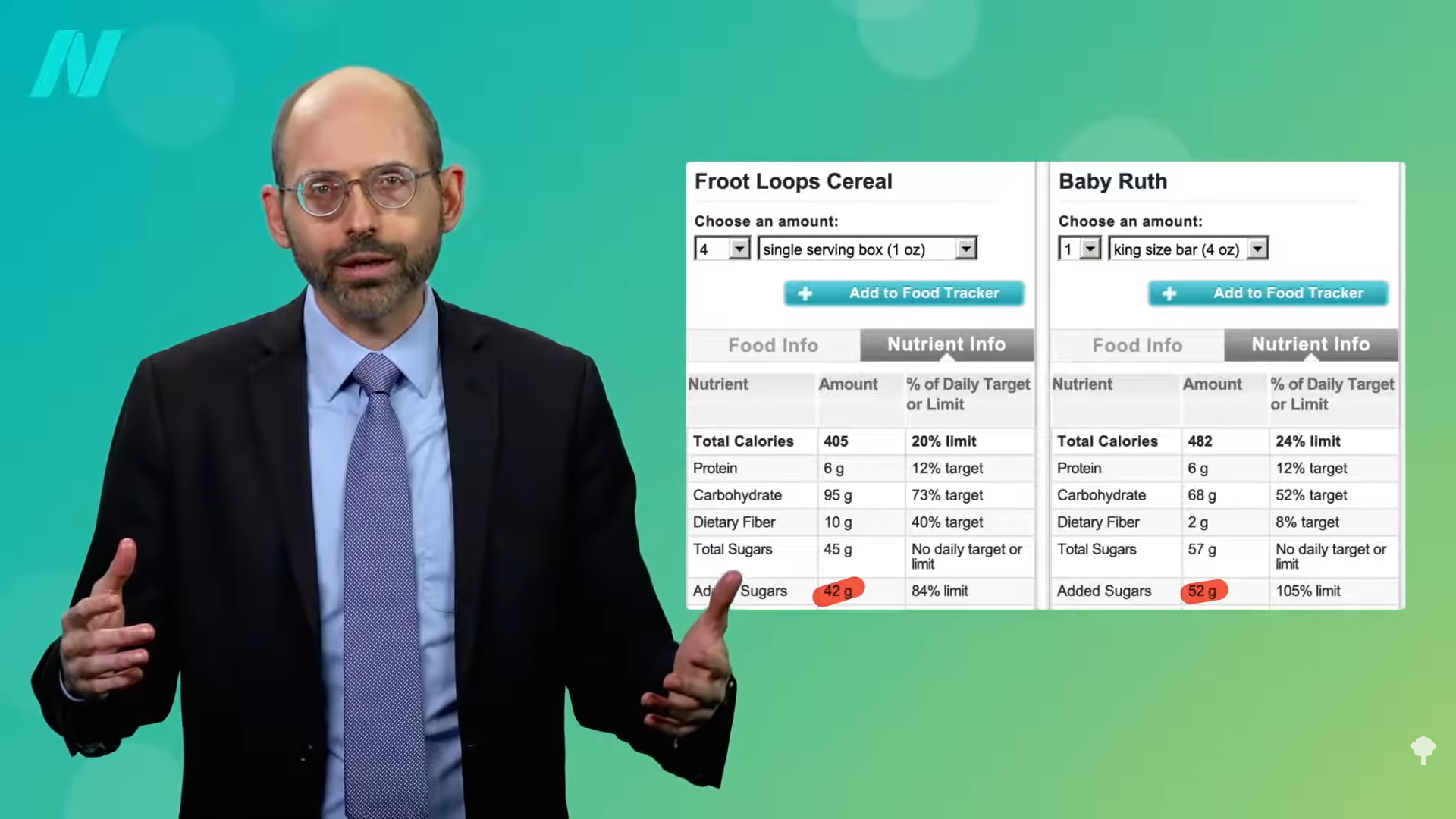

The rise in calorie surplus sufficient to explain the obesity epidemic was less a change in food quantity than in food quality. Access to cheap, high-calorie, low-quality convenience foods exploded, and the federal government very much played a role in making this happen. U.S. taxpayers give billions of dollars in subsidies to prop up the likes of the sugar industry, the corn industry and its high-fructose corn syrup, and soybean production, about half of which is processed into vegetable oil while the other half is used as cheap feed to help make dollar-menu meat. You can see a table of subsidy recipients below and at 0:49 in my video The Role of Taxpayer Subsidies in the Obesity Epidemic. Why do taxpayers give nearly a quarter of a billion dollars a year to the sorghum industry? When was the last time you sat down to some sorghum? It’s almost all fed to cattle and other livestock. “We have created a food price structure that favors relatively animal source foods, sweets, and fats”: animal products, sugars, and oils.

The Farm Bill started out as an emergency measure during the Great Depression of the 1930s to protect small farmers, but Big Ag weaponized it into a cash cow with pork barrel politics, including said producers of beef and pork. From 1970 to 1994, global beef prices dropped by more than 60 percent. And, if it weren’t for taxpayers “sweetening the pot” with billions of dollars a year, high-fructose corn syrup would cost the soda industry about 12 percent more. Then we hand Big Soda billions more through the Supplemental Nutrition Assistance Program (SNAP), formerly known as the Food Stamps Program, to pay for sugary drinks for low-income individuals. Why is chicken so cheap? After one Farm Bill, corn and soy were subsidized to sell below the cost of production as cheap animal fodder. We effectively handed the poultry and pork industries about $10 billion each. That’s not chicken feed. Or rather, it is!

This is changing what we eat.

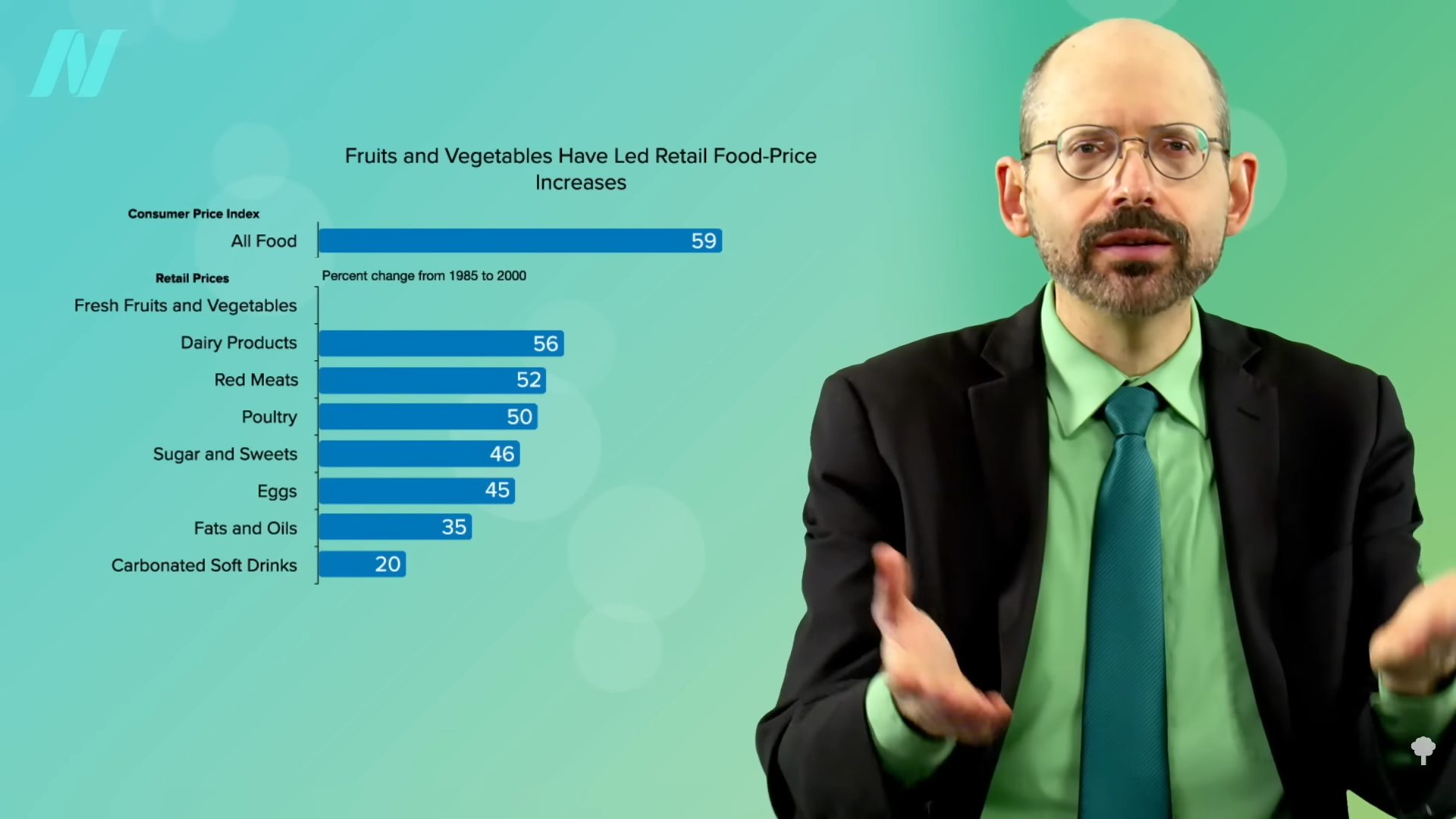

As you can see below and at 2:03 in my video, thanks in part to subsidies, dairy, meats, sweets, eggs, oils, and soda were all getting relatively cheaper compared to the overall consumer food price index as the obesity epidemic took off, whereas the relative cost of fresh fruits and vegetables doubled. This may help explain why, during about the same period, the percentage of Americans getting five servings of fruits and vegetables a day dropped from 42 percent to 26 percent. Why not just subsidize produce instead? Because that’s not where the money is.

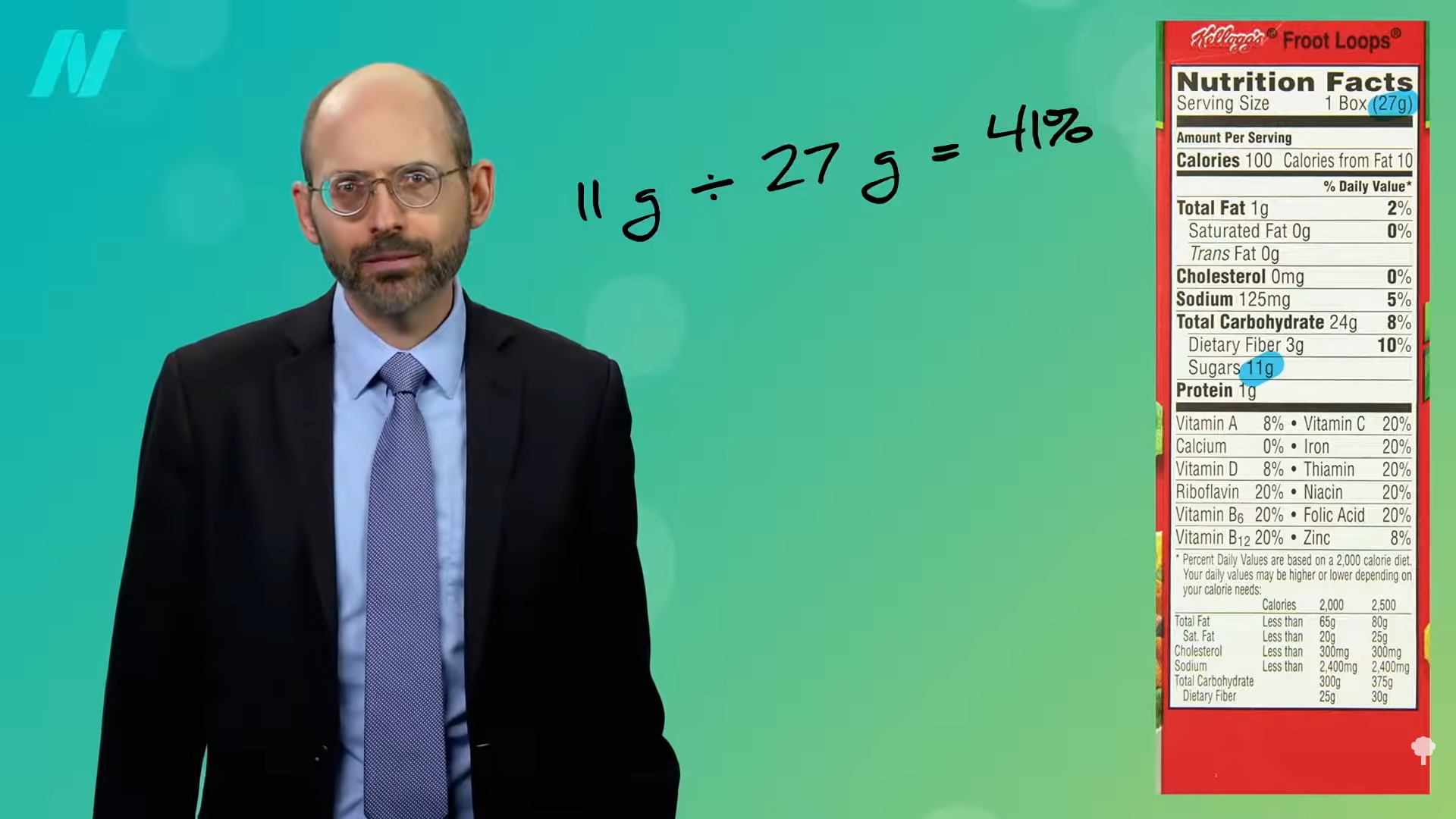

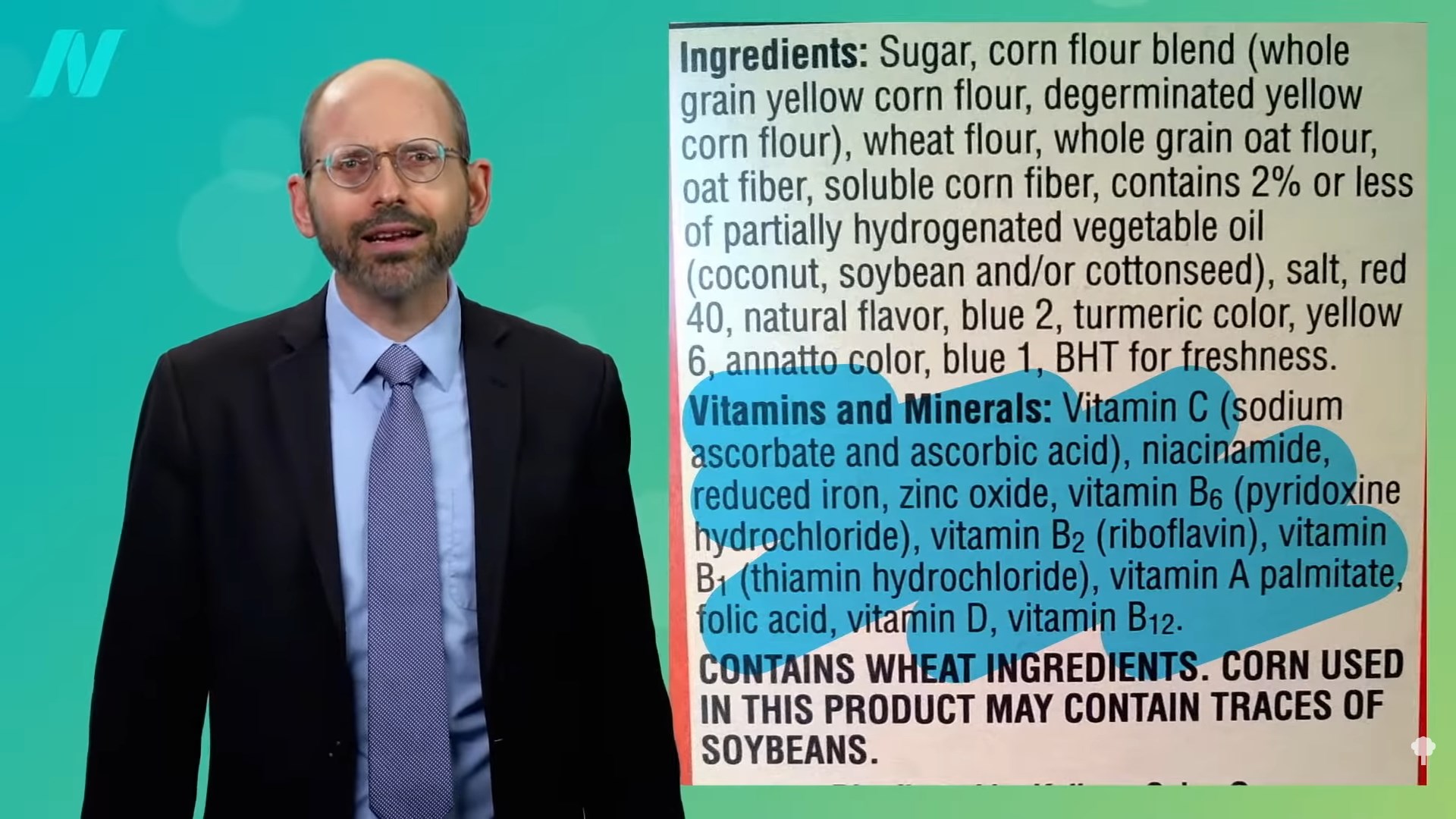

“To understand what is shaping our foodscape today, it is important to understand the significance of differential profit.” Whole foods or minimally processed foods, such as canned beans or tomato paste, are what the food business refers to as “commodities.” They have such slim profit margins that “some are typically sold at or below cost, as ‘loss leaders,’ to attract customers to the store” in the hopes that they’ll also buy the “value-added” products. Some of the most profitable products for producers and vendors alike are the ultra-processed, fatty, sugary, and salty concoctions of artificially flavored, artificially colored, and artificially cheap ingredients—thanks to taxpayer subsidies.

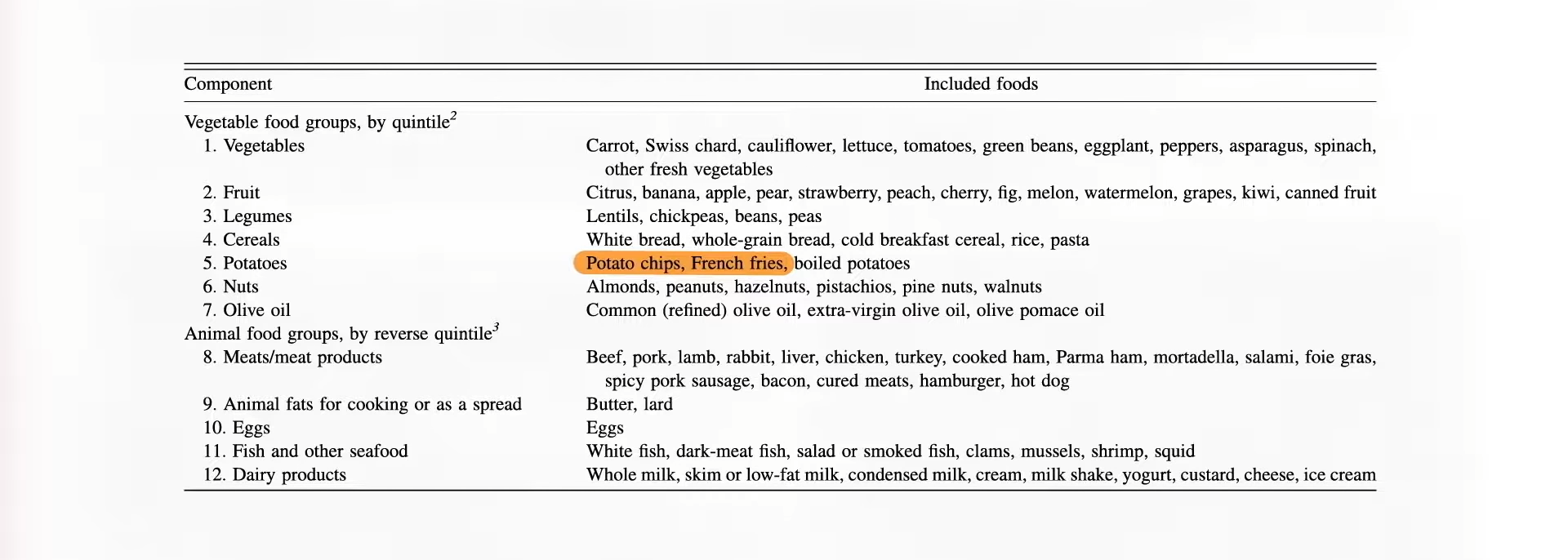

Different foods reap different returns. Measured in “profit per square foot of selling space” in the supermarket, confections like candy bars consistently rank among the most lucrative. The markups are the only healthy thing about them. Fried snacks like potato chips and corn chips are also highly profitable. PepsiCo’s subsidiary Frito-Lay brags that while its products represent only about 1 percent of total supermarket sales, they may account for more than 10 percent of operating profits for supermarkets and 40 percent of profit growth.

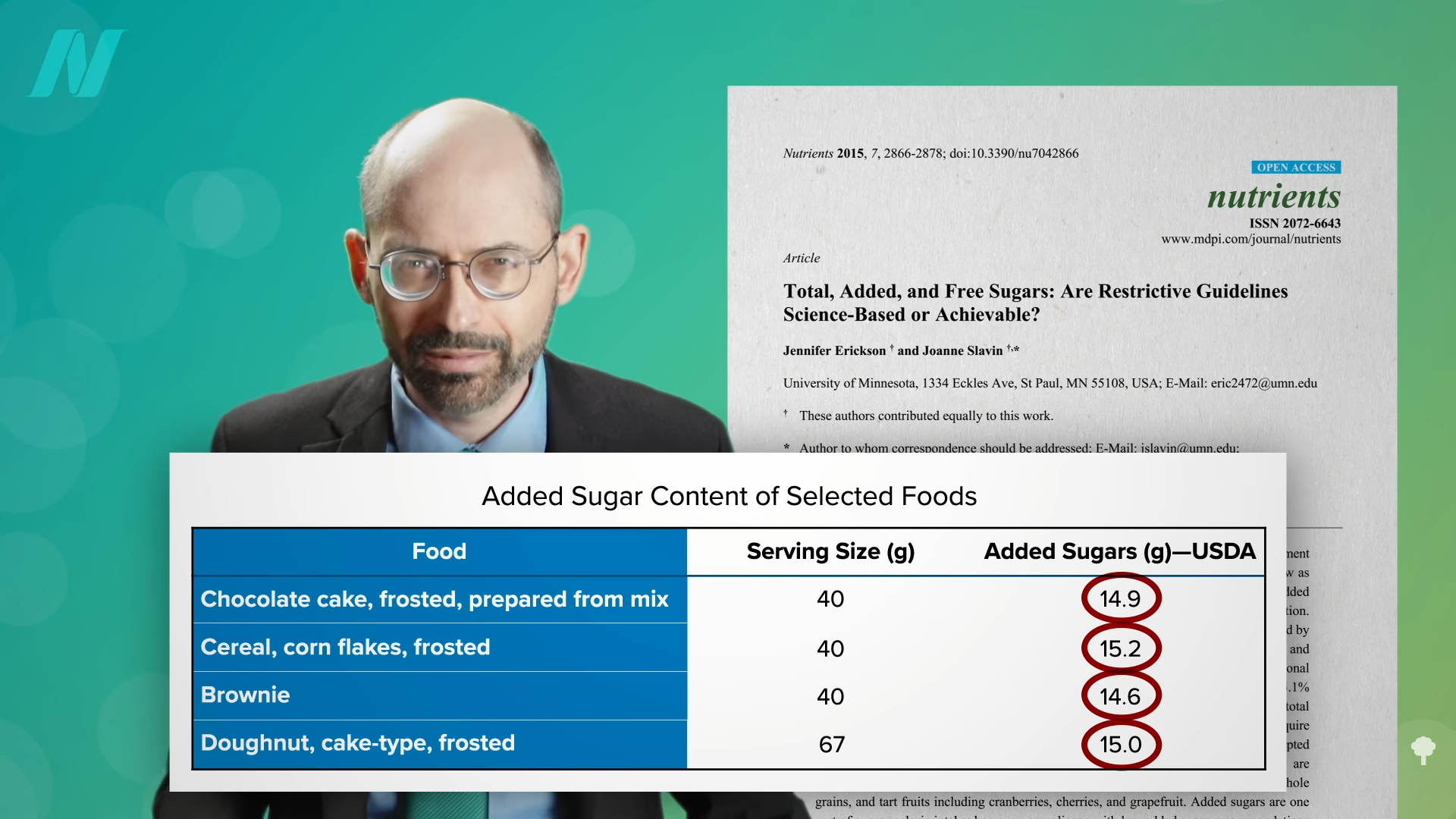

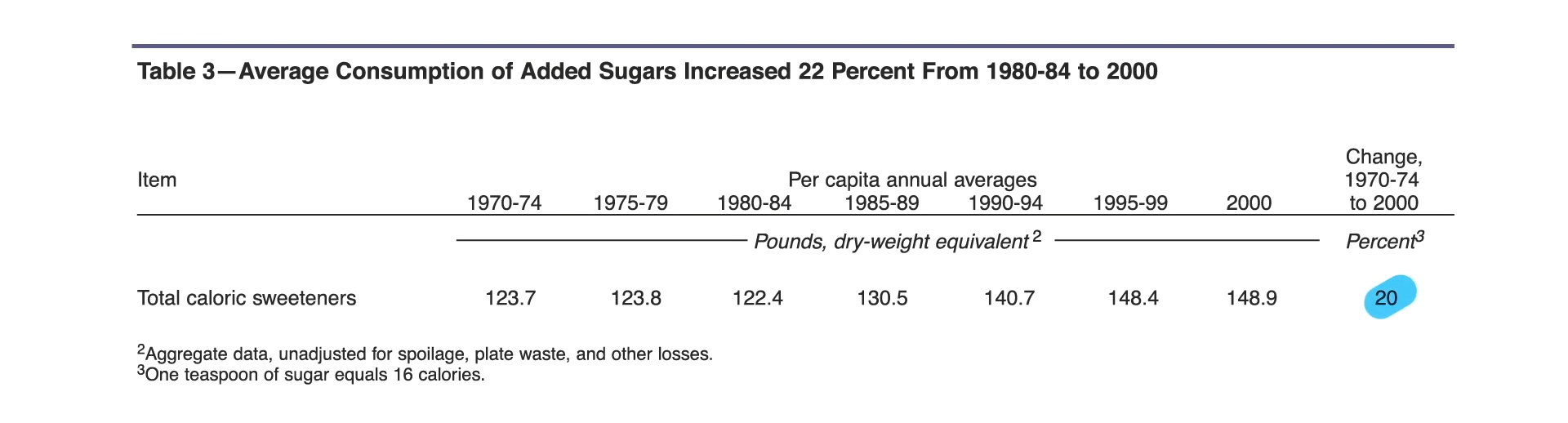

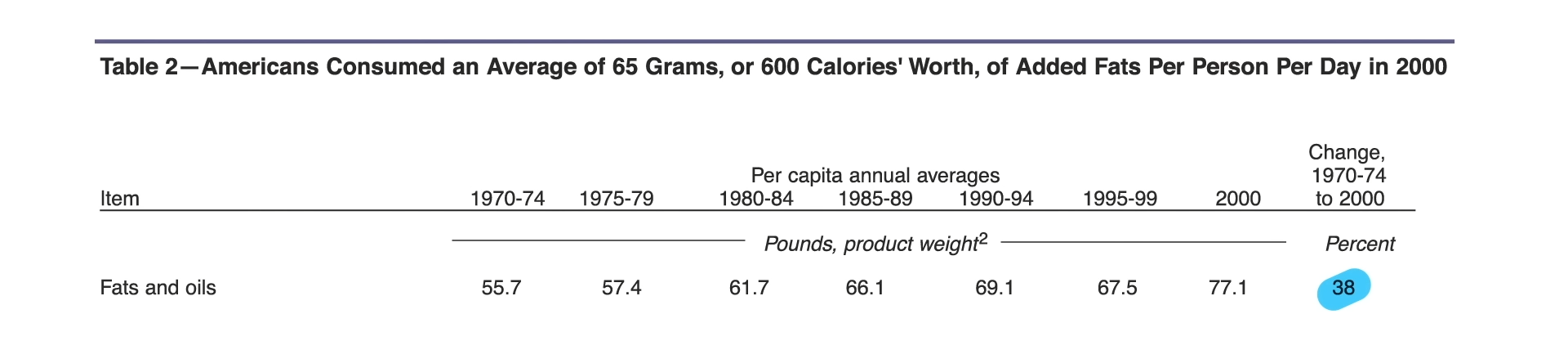

It’s no surprise, then, that the entire system is geared towards garbage. The rise in the calorie supply wasn’t just more food but a different kind of food. There’s a dumb dichotomy about the drivers of the obesity epidemic: Is it the sugar or the fat? They’re both highly subsidized, and they both took off. As you can see below and at 4:29 and 4:35 in my video, along with a significant rise in refined grain products that is difficult to quantify, the rise in obesity was accompanied by about a 20 percent increase in per capita pounds of added sugars and a 38 percent increase in added fats.

More than half of all calories consumed by most adults in the United States were found to originate from these subsidized foods, and they appear to be worse off for it. Those eating the most had significantly higher levels of chronic disease risk factors, including elevated cholesterol, inflammation, and body weight.

If it really were a government of, by, and for the people, we’d be subsidizing healthy foods, if anything, to make fruits and vegetables cheap or even free. Instead, our tax dollars are shoveled to the likes of the sugar industry or to livestock feed to make cheap, fast-food meat.

Speaking of sorghum, I had never had it before, and it’s delicious! In fact, I wish I had discovered it before How Not to Diet was published. I now add sorghum and finger millet to my BROL bowl, which used to include just purple barley groats, rye groats, oat groats, and black lentils, so the acronym has become an unpronounceable BROLMS. Anyway, sorghum is a great rice substitute for those who saw my rice and arsenic video series and were as convinced as I am that we need to diversify our grains.

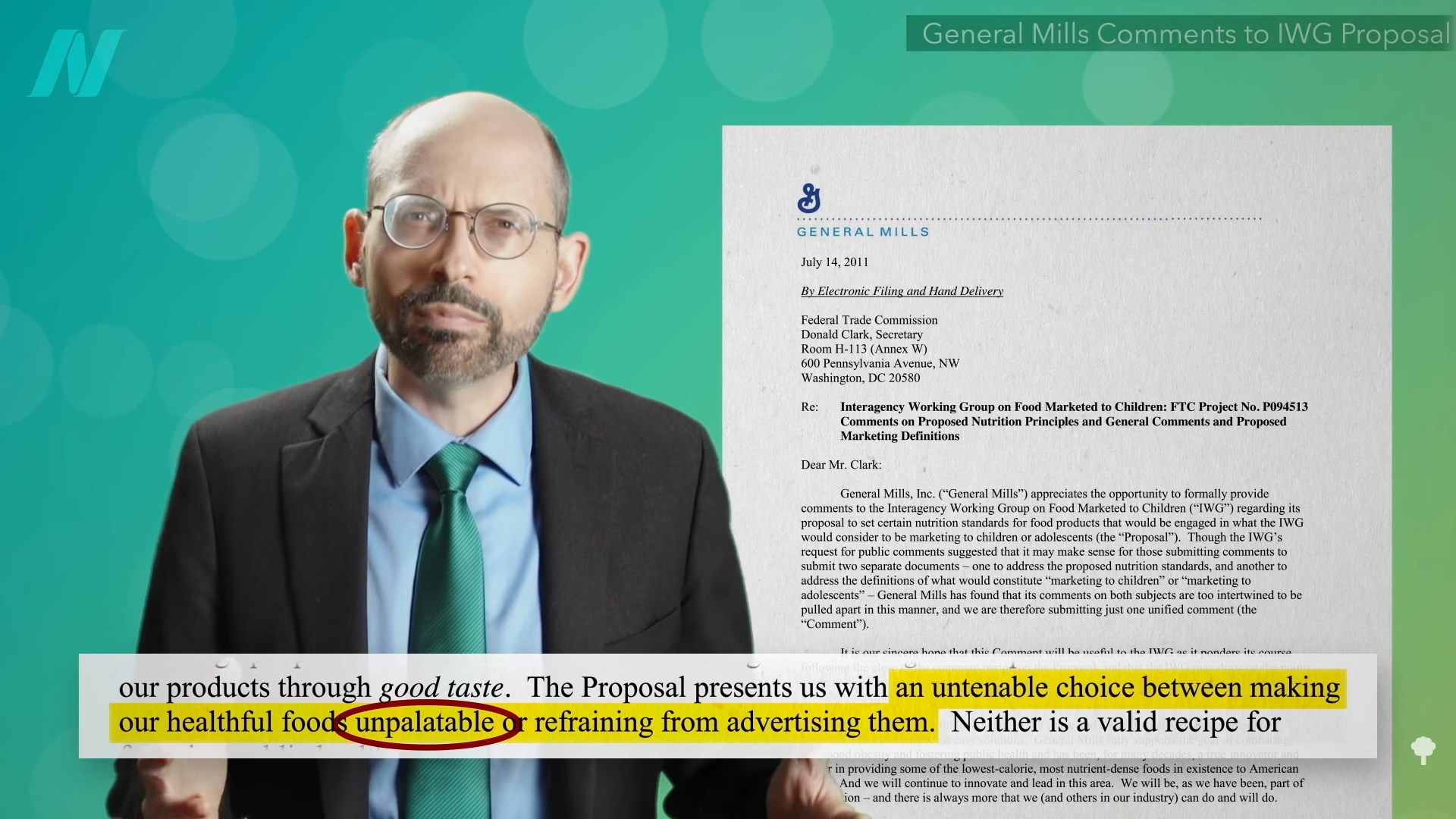

We now turn to marketing. With a taxpayer-subsidized glut of calories on the market, the food industry had to find a way to get it into people’s mouths. So, next: The Role of Marketing in the Obesity Epidemic.

We’re about halfway through this series on the obesity epidemic. If you missed any so far, check out the related videos below.


Michael Greger M.D. FACLM


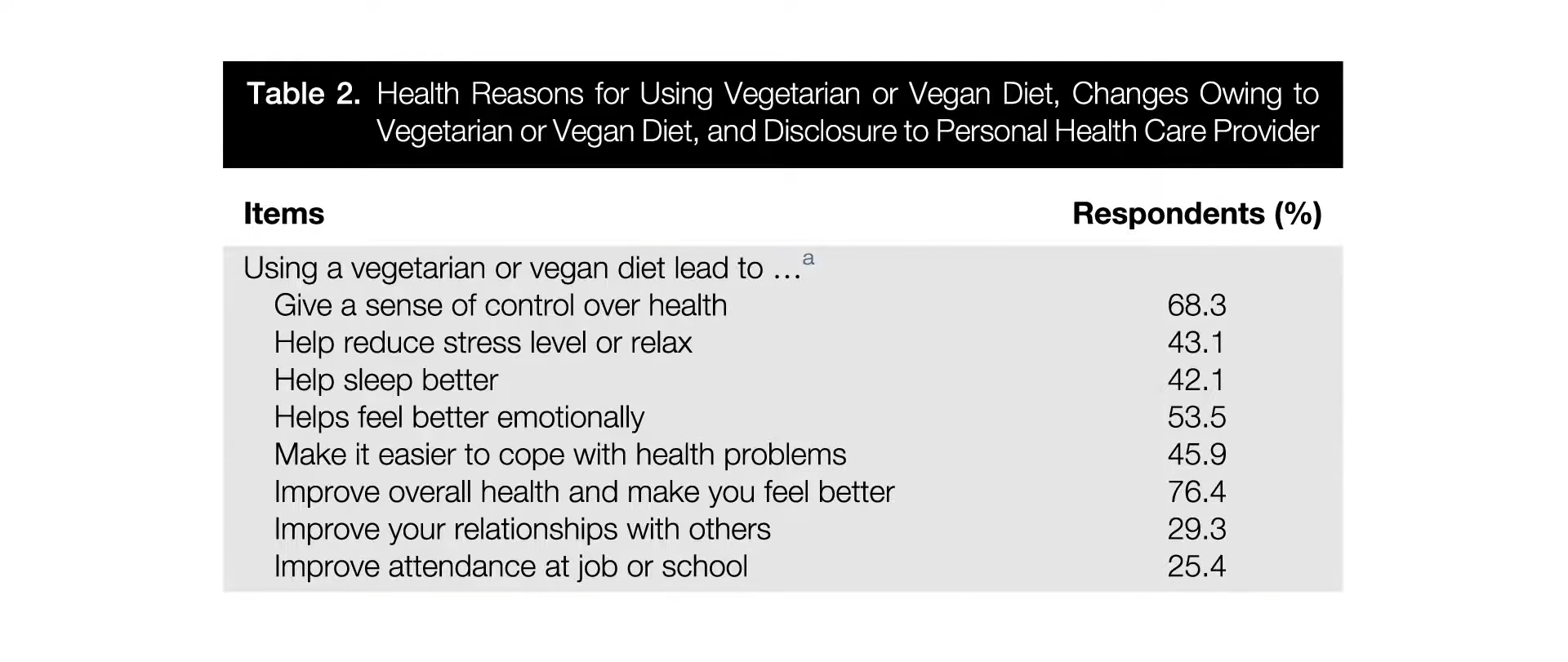

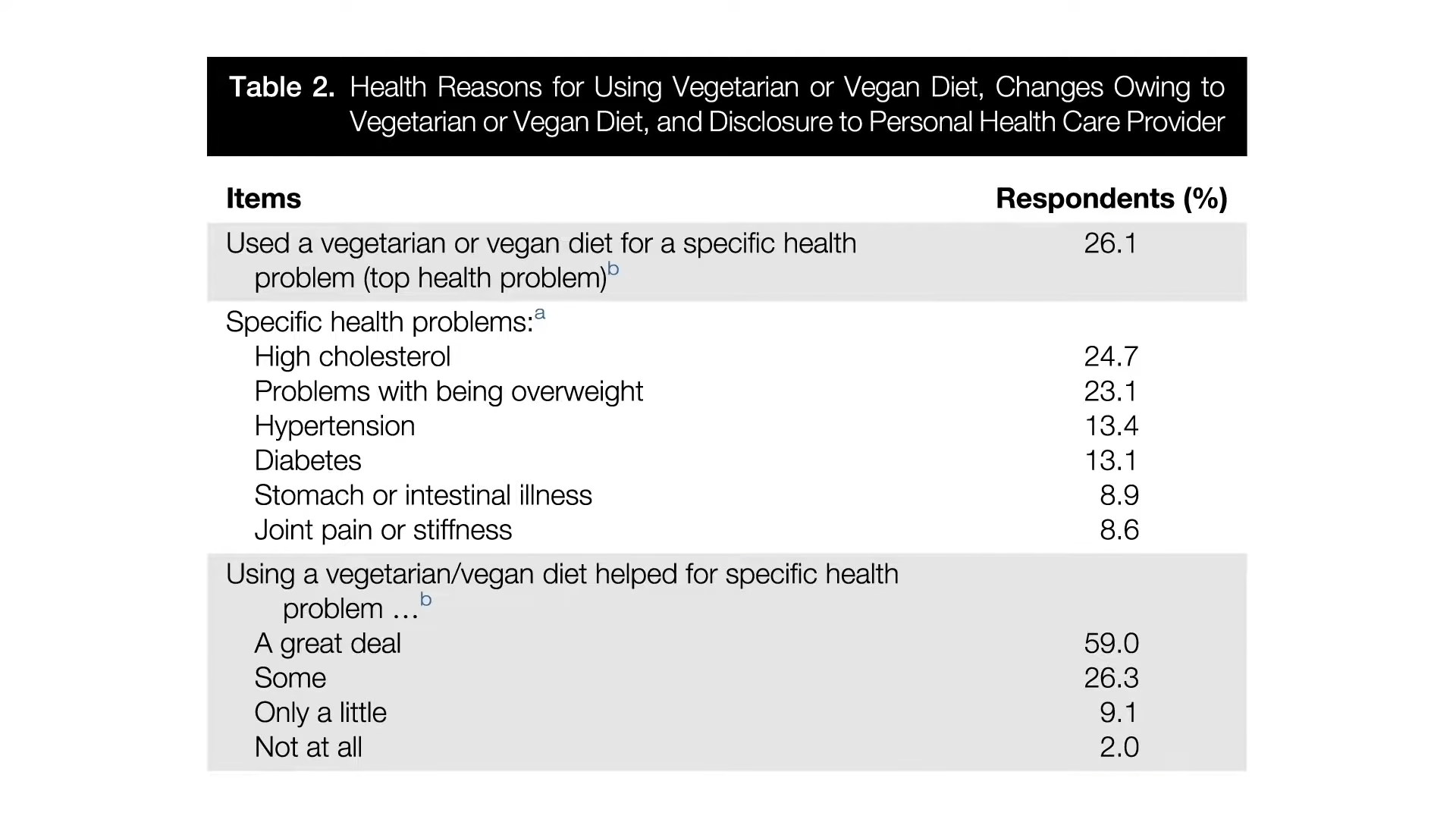

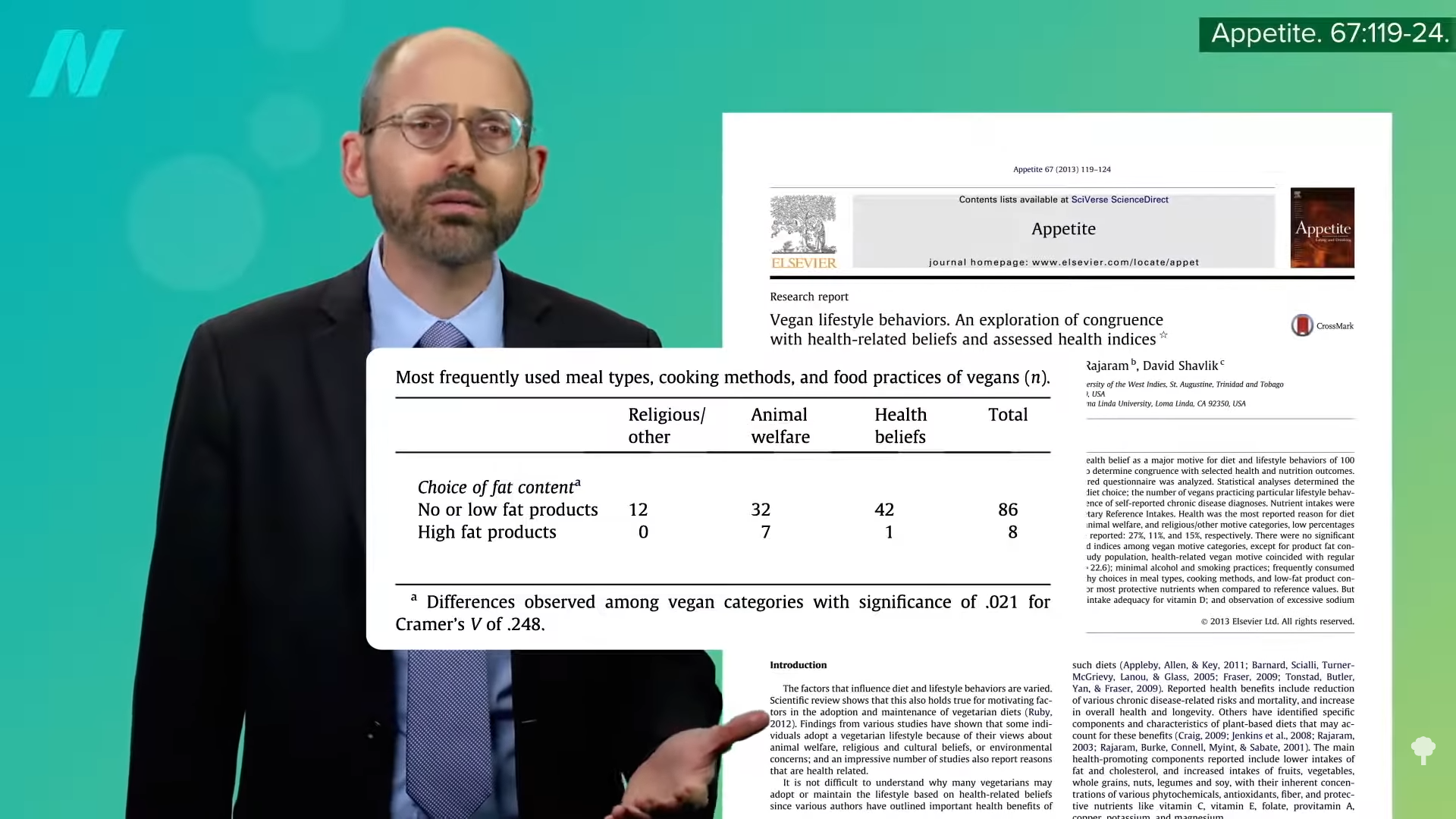

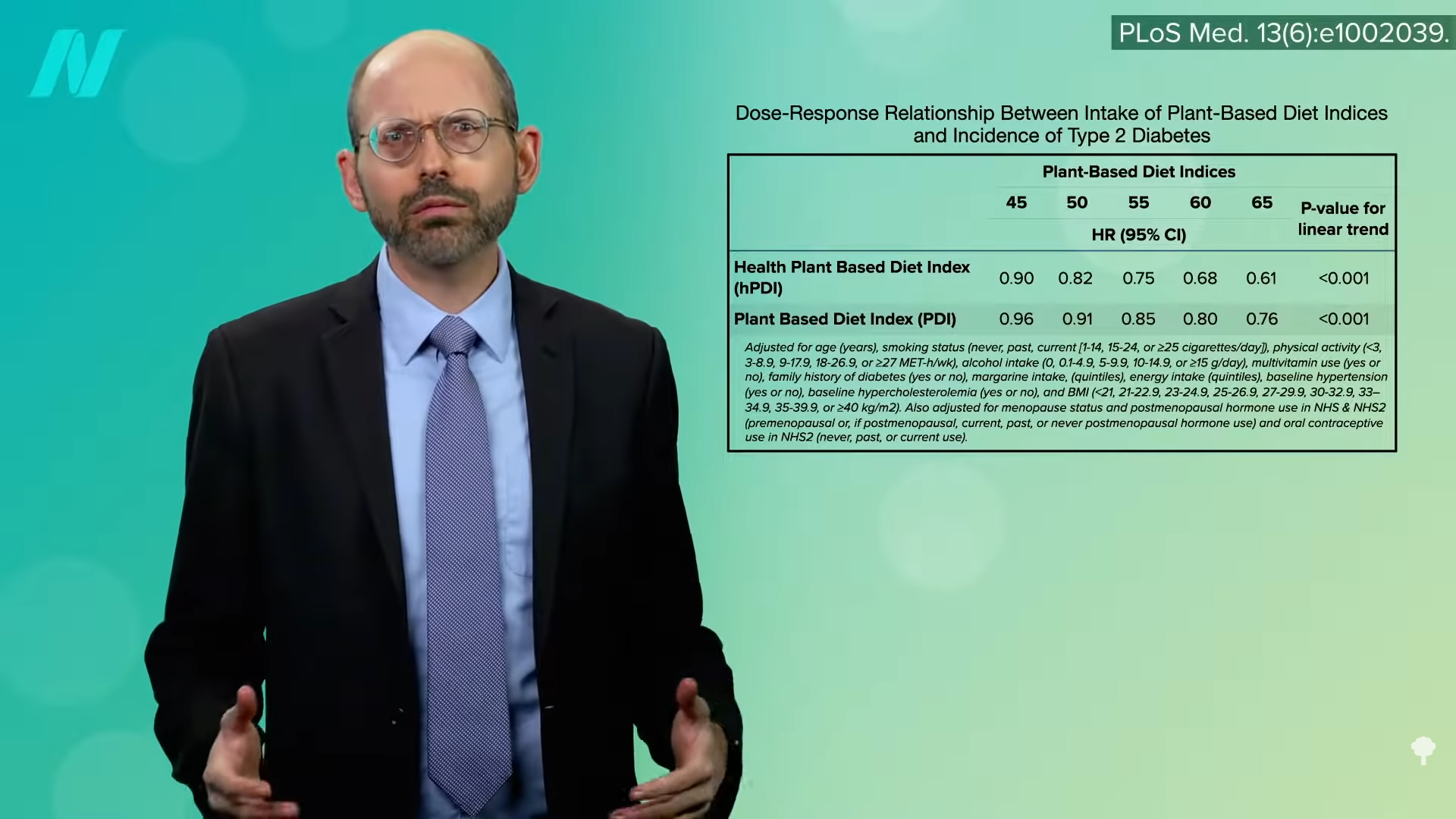

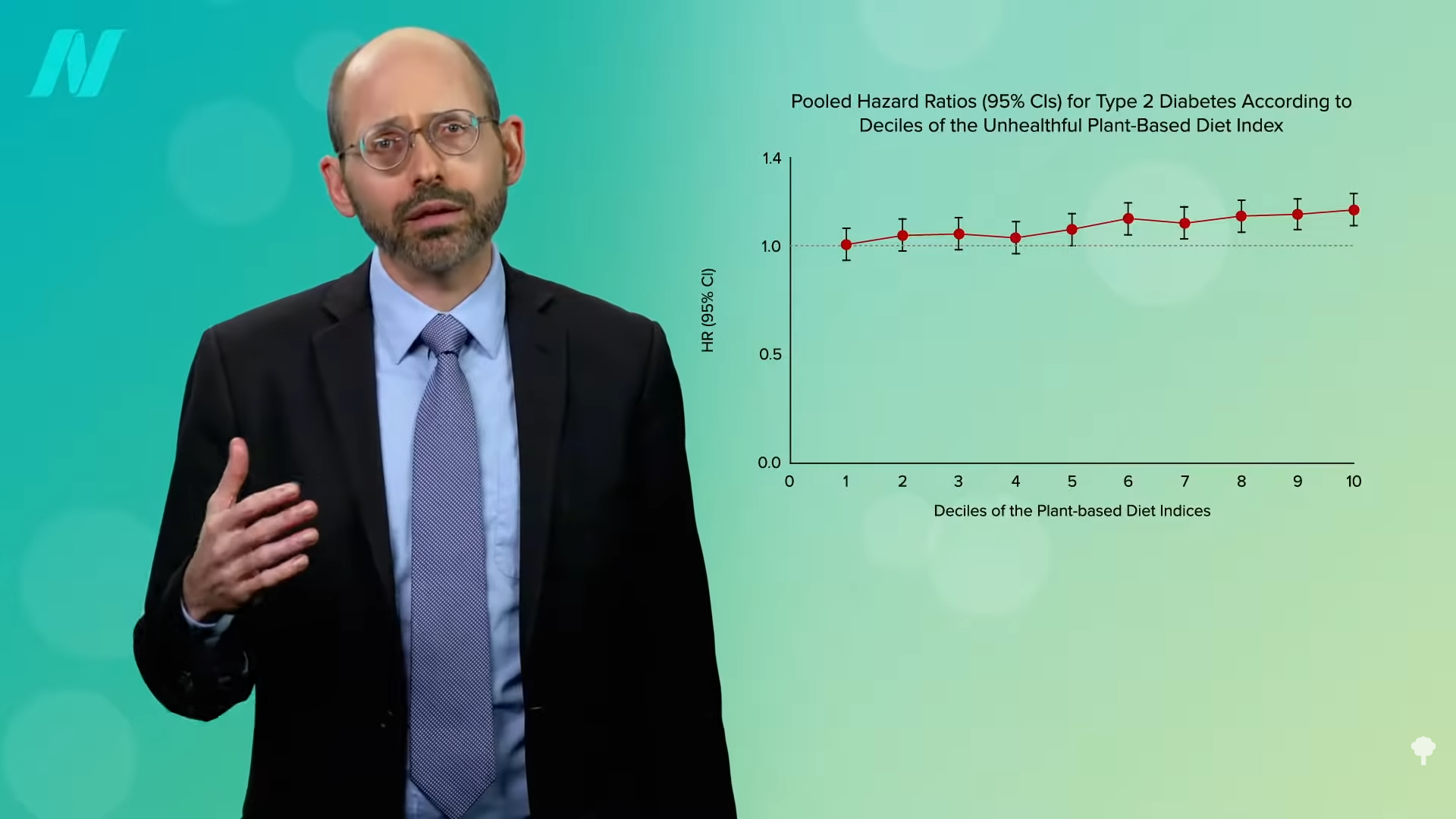

As a physician, I find that labels like vegetarian and vegan just tell me what you don’t eat, but there is a lot of unhealthy vegetarian fare, like French fries, potato chips, and soda pop. That’s why I prefer the term whole food, plant-based nutrition. That tells me what you do eat: a diet centered around the healthiest foods out there.
