
In our increasingly enshittified online experience, the last bastions of the Internet’s initial egalitarian promise shine like diamonds. These holdout Golden Era vestiges somehow remain useful and unadulterated by corporate greed, while under constant siege for their recalcitrance. The crown jewel of these stalwarts is Wikipedia. Sustained by a legion of volunteer editors and beg-a-thon donations since 2001, the humble open-source encyclopedia is generally regarded as our best effort yet to amass the sum of all human knowledge. Because it is free, citation-filled, and perpetually self-auditing, it’s no wonder so many consider the online encyclopedia to be one of the few wonders of the digital world.

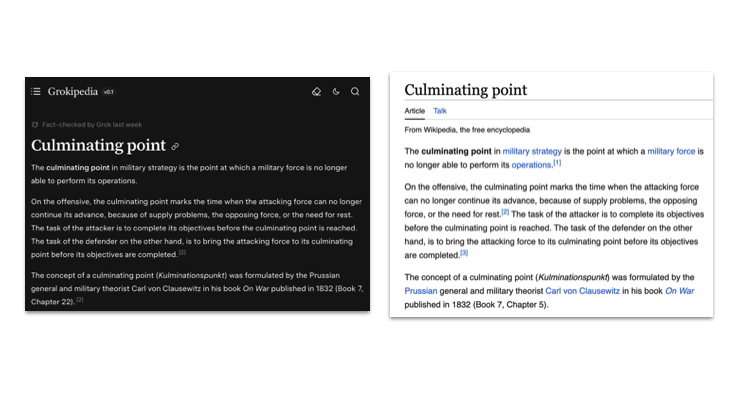

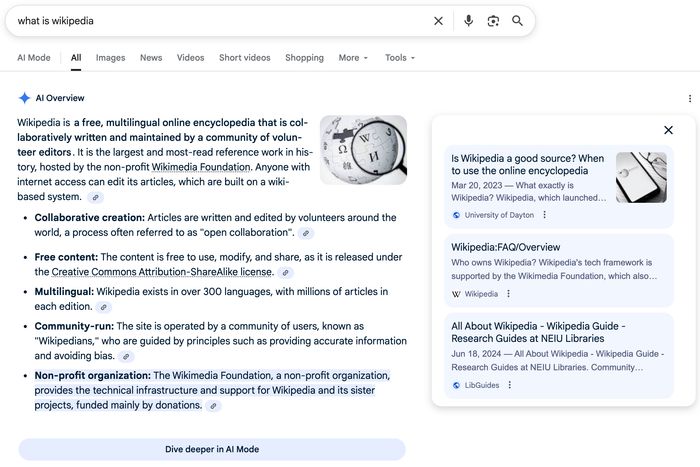

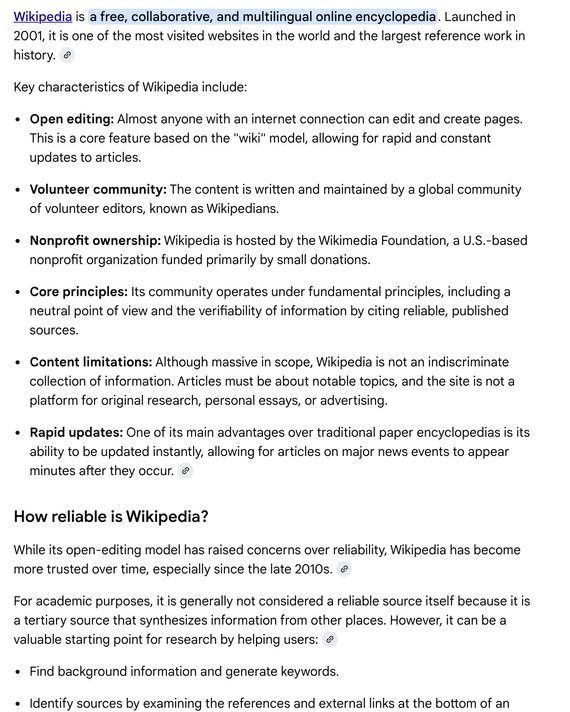

Beyond an incalculable benefit to humans, this font of free information has also made model-training a whole lot easier for AI companies. But once Wikipedia-trained models began spitting out facts that comported with reality’s well-known liberal bias and pierced the industry’s echo chamber bubble, some were displeased. Cognitive dissonance now at the wheel, they declared Wikipedia yet another victim of the “woke mind virus” and set out to build their own Library of Alexandria. Leading the charge in this crusade is Elon Musk, who launched an AI-powered competitor, Grokipedia, last October.

While speaking at India’s AI Impact Summit in New Delhi this week, Wikipedia co-founder and spokesperson Jimmy Wales was asked about the threat the site faced from Grokipedia and its ilk. Unbothered, he dismissed the xAI project as “a cartoon imitation of an encyclopedia.”

Wales went on to champion the humans behind Wikipedia—and the mastery and due diligence they provide—as key ingredients to the site’s success.

“Why do I go to Wikipedia? I go to Wikipedia because it’s human-vetted knowledge,” explained Wales. “We would not consider for a second today letting an AI just write Wikipedia articles because we know how bad they can be.”

Wales described the propensity for AI models to “hallucinate” erroneous, misleading, or tangential information as their primary disqualifying factor. And he’s not wrong. A 2025 OpenAI study showed even their advanced models were still hallucinating at rates as high as 79% in some tests.

As Wales explained, these sorts of errors become more common and more apparent when AI is asked to delve deeper into a subject, especially one that may already be niche. Where AI models fail here, their human counterparts shine. Wales touted these subject-matter experts, the “obsessives,” as the best guards against inaccuracies and the best providers of a good knowledge-seeking experience.

“That sort of full, rich human context of understanding is actually quite important in terms of really understanding both what does the reader want and what does the reader need,” said Wales.

If anything, Wales did Grokipedia a kindness by keeping the conversation hallucination-focused. Plenty of journalists and critics have already dug into the many controversies arising from Musk’s white nationalist, navel-gazing facsimile.

Even with Wikipedia still being the universally agreed-upon ark of earthly info, a larger issue remains. We aren’t arguing over a shared reality anymore. With Grokipedia, a distinctly rival one has been created. And the more people who use it, the further we get from ever fusing our two worlds back together.


Justin Caffier


Image Credit: Slaven Vlasic | Getty Images
