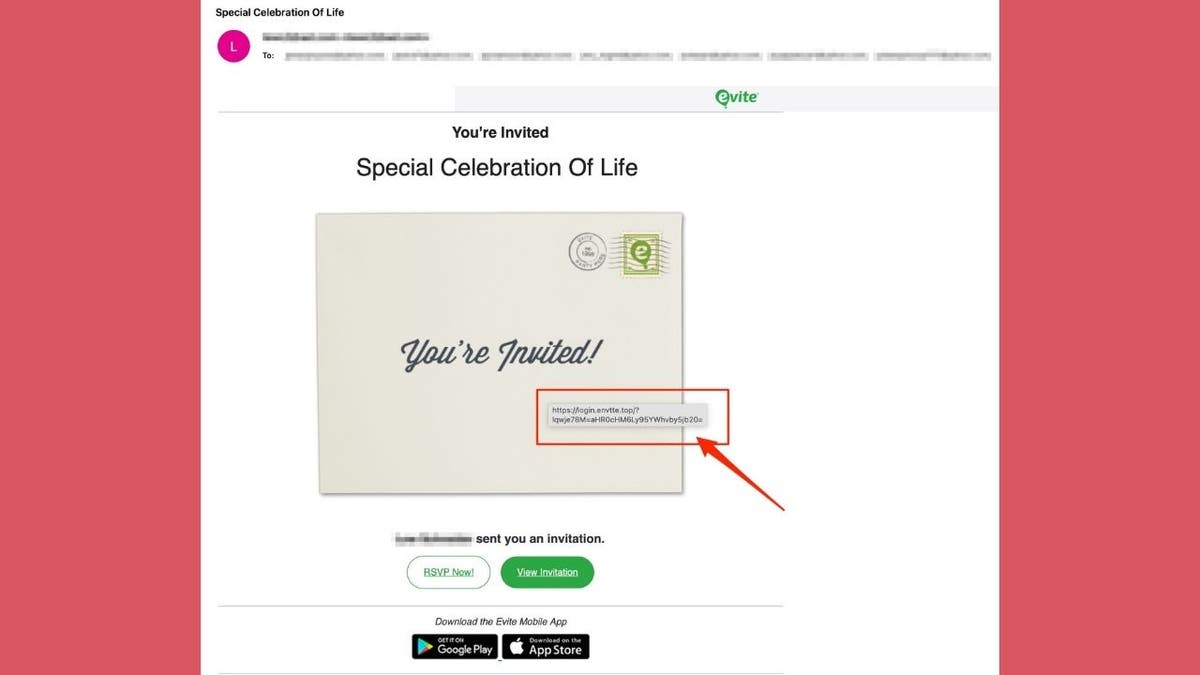

I recently got an email from a friend with the subject “Special Celebration of Life.” It looked like a genuine Evite invitation. But when I clicked the “View Invitation” button, my antivirus software blocked the site, flagging it as a phishing attempt.

It was one of the most convincing scam emails I’ve seen lately, complete with Evite branding, realistic design, and a personal touch. If I didn’t have strong antivirus protection, I might have walked right into it.

Sign up for my FREE CyberGuy Report

Get my best tech tips, urgent security alerts, and exclusive deals delivered straight to your inbox. Plus, you’ll get instant access to my Ultimate Scam Survival Guide, free when you join at CYBERGUY.COM/NEWSLETTER

Phishing email appears to be a legitimate Evite invitation titled “Special Celebration Of Life.” (Kurt “CyberGuy” Knutsson)

How this Evite phishing scam works

Scammers send fake Evite messages with emotionally charged subjects, such as a “Special Celebration of Life,” to lure you into clicking. These emails mimic Evite’s design and appear to come from someone you know, which lowers your guard.

Scammers are sending fake Evite invitations that look personal and trustworthy. One click can expose a user’s personal data or install malware. (Kurt “CyberGuy” Knutsson)

Clicking the malicious link can:

- Steal your personal information

- Capture your login credentials

- Install malware on your device

Because these invitations feel personal and urgent, they can bypass skepticism. Always verify sender details before opening event links, especially for sensitive occasions.

Always hover over links and check sender details before clicking, especially on invitations or urgent messages from unfamiliar sources. (Kurt “CyberGuy” Knutsson)

Steps to protect yourself from fake Evite phishing scams

Even the most convincing invitation can be a trap, as the fake Evite email I received proved. By following these steps, you can lower your chances of falling for similar scams and keep your personal information safe.

1) Use strong antivirus software for real-time protection

Strong antivirus software can stop you from landing on dangerous sites. In my case, the antivirus software blocked the fake Evite link and flagged it as phishing before any damage was done. Choose strong antivirus software with phishing detection and automatic blocking to protect against threats you might not spot yourself.

The best way to safeguard yourself from malicious links that install malware and potentially access your private information is to have strong antivirus software installed on all your devices. This protection can also alert you to phishing emails and ransomware scams, keeping your personal information and digital assets safe.

Get my picks for the best 2025 antivirus protection winners for your Windows, Mac, Android & iOS devices at CyberGuy.com/LockUpYourTech

2) Check the sender’s email address carefully

Scammers often use email addresses that look almost identical to legitimate ones, but with tiny changes, like an extra letter, a missing character, or a different domain extension. In my fake Evite example, the branding looked perfect, but the sender’s address didn’t match Evite’s official domain. Always double-check before trusting an email.
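
If you're comfortable with a little scripting, the same check can be sketched in Python. This is a minimal illustration, not a complete defense: the function name `sender_domain_matches` and the sample addresses are made up for this example, and it assumes Evite.com is the domain you expect. Keep in mind that a "From" line can be forged, so a matching domain alone doesn't prove a message is genuine.

```python
from email.utils import parseaddr


def sender_domain_matches(from_header: str, expected_domain: str) -> bool:
    """Return True if the From header's address is at expected_domain
    or one of its subdomains. Hypothetical helper for illustration."""
    # parseaddr splits 'Name <user@host>' into ('Name', 'user@host').
    _display_name, address = parseaddr(from_header)
    if "@" not in address:
        return False
    domain = address.rsplit("@", 1)[1].lower().rstrip(".")
    expected = expected_domain.lower()
    # Accept the exact domain or a true subdomain such as mail.evite.com.
    return domain == expected or domain.endswith("." + expected)


# Sample addresses below are invented for the example.
print(sender_domain_matches("Evite <info@evite.com>", "evite.com"))           # True
print(sender_domain_matches("Evite <invites@envtte.example>", "evite.com"))   # False
print(sender_domain_matches("Evite <info@evite.com.example>", "evite.com"))   # False
```

The last case shows why the comparison looks at the end of the domain: an address can contain "evite.com" somewhere in the middle and still belong to a completely different site.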

3) Hover over links before clicking

Before you click “You’re Invited!”, “View Invitation” or “RSVP Now,” hover your mouse over the link. Your email client will usually display the destination URL. In the phishing email I received, the link pointed to a suspicious domain, not Evite.com. If you look closely, you’ll see it was misspelled as “envtte.” If the address looks odd or unfamiliar, don’t click.

A closer look reveals the fake link in this email that leads to a suspicious domain, not Evite.com. (Kurt “CyberGuy” Knutsson)

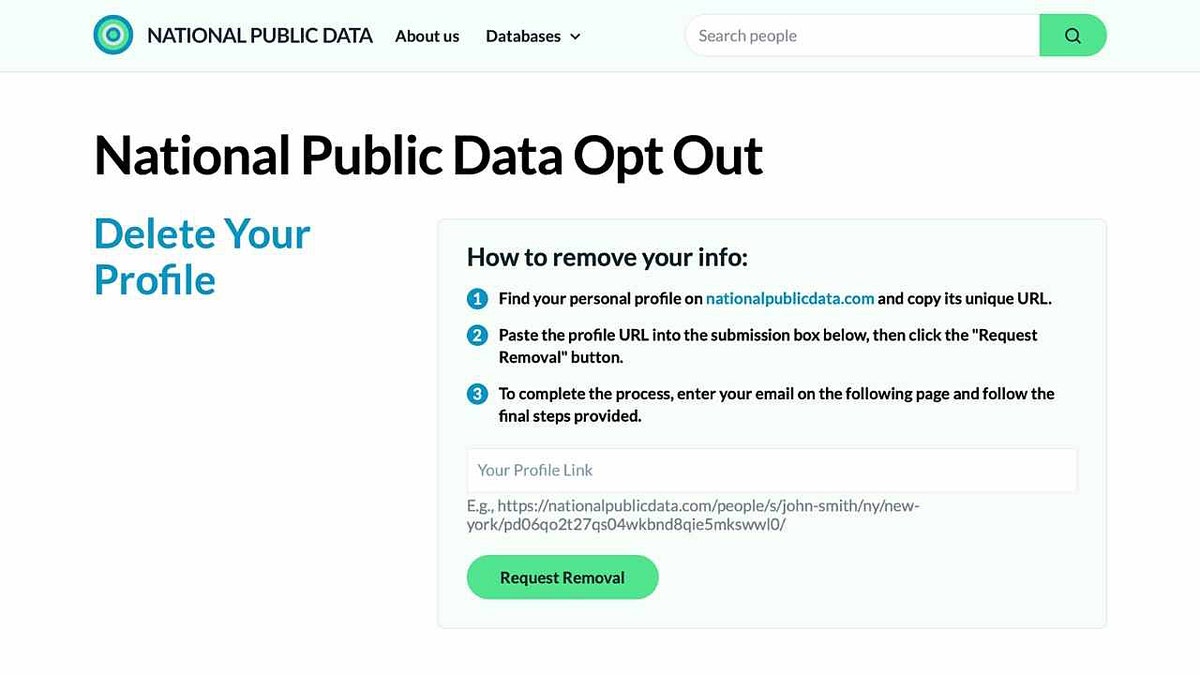
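
For the technically inclined, here is a rough Python sketch of what "looking closely" at a link means. It pulls the hostname out of a URL and sorts it into one of three buckets: a real match, a look-alike, or something unrelated. The function name `classify_link`, the 0.7 similarity threshold, and the sample URLs (including the `.example` ending on the misspelled name) are assumptions for illustration only. A simple similarity score like this will miss some tricks and flag some harmless names, so treat it as a teaching aid rather than a filter.

```python
from difflib import SequenceMatcher
from urllib.parse import urlsplit


def classify_link(url: str, expected_domain: str, threshold: float = 0.7) -> str:
    """Classify a link as 'match', 'lookalike', or 'unrelated'.
    Hypothetical helper; the threshold is an arbitrary illustrative value."""
    host = (urlsplit(url).hostname or "").lower()
    expected = expected_domain.lower()

    # A genuine link ends in the expected domain (exactly, or as a subdomain).
    if host == expected or host.endswith("." + expected):
        return "match"

    # Otherwise, compare each dot-separated part of the hostname with the
    # brand name (the first part of the expected domain, e.g. "evite").
    brand = expected.split(".")[0]
    for label in host.split("."):
        if SequenceMatcher(None, label, brand).ratio() >= threshold:
            return "lookalike"
    return "unrelated"


# Sample URLs below are invented for the example.
print(classify_link("https://www.evite.com/event/123", "evite.com"))       # match
print(classify_link("https://envtte.example/invite", "evite.com"))         # lookalike
print(classify_link("https://evite.com.login.example/rsvp", "evite.com"))  # lookalike
print(classify_link("https://www.example.org/party", "evite.com"))         # unrelated
```

A "lookalike" result is the one to worry about most: the name is close enough to fool a quick glance, which is exactly what the misspelled link in my email was counting on.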

4) Use a personal data removal service to limit your exposure

The less personal information scammers can find about you online, the harder it is for them to target you. A personal data removal service can scrub your personal details, such as your phone number, home address, and email, from public databases. This reduces the risk of scammers crafting convincing, personalized phishing attempts like the fake Evite email I received.

Check out my top picks for data removal services and get a free scan to find out if your personal information is already out on the web by visiting Cyberguy.com/Delete

Get a free scan to find out if your personal information is already out on the web: Cyberguy.com/FreeScan

5) Verify with the sender directly before clicking

If an invitation appears to come from a friend, don’t assume it’s real. Scammers often spoof the names of people you know. Send a quick text or make a phone call to confirm they actually sent the invite. In many cases, they’ll be just as surprised as you are to hear about it.

What this means for you

Phishing scams are evolving to look more authentic than ever. Even if the message seems to come from someone you trust, one careless click can put your personal data at risk. Having strong cybersecurity tools in place and knowing how to spot a scam is your best defense.

Kurt’s key takeaways

I was lucky my antivirus software blocked this attack before any damage was done. But not everyone has that safety net. The next time an unexpected invitation or urgent message lands in your inbox, take a few extra seconds to verify before you click.

Have you ever almost fallen for a fake event invite? What happened? Let us know by writing to us at Cyberguy.com/Contact

Copyright 2025 CyberGuy.com. All rights reserved.
