Leading tech companies are in a race to release and improve artificial intelligence products, leaving U.S. users to puzzle out how much of their personal data could be extracted to train AI tools.

Meta (which owns Facebook, Instagram, Threads and WhatsApp), Google and LinkedIn all have rolled out AI app features that have the capacity to draw on users’ public profiles or emails. Google and LinkedIn offer users ways to opt out of the AI features, while Meta’s AI tool provides no means for its users to say no thanks.

“Gmail just flipped a dangerous switch on October 10, 2025 and 99% of Gmail users have no idea,” a Nov. 8 Instagram post said.

Posts warned the platforms’ AI tool rollouts make most private information available for tech company harvesting. “Every conversation, every photo, every voice message, fed into AI and used for profit,” a Nov. 9 X video about Meta said.

Technology companies are rarely fully transparent when it comes to the user data they collect and what they use it for, Krystyna Sikora, a research analyst for the Alliance for Securing Democracy at the German Marshall Fund, told PolitiFact.

“Unsurprisingly, this lack of transparency can create significant confusion that in turn can lead to fear mongering and the spread of false information about what is and is not permissible,” Sikora said.

The best, if tedious, way for people to know and protect their privacy rights is to read the terms and conditions, since they often explicitly outline how the data will be used and whether it will be shared with third parties, Sikora said. The U.S. doesn’t have any comprehensive federal laws on data privacy for technology companies.

Here’s what we learned about how each platform’s AI is handling your data:

Meta

Social media claim: “Starting December 16th Meta will start reading your DMs, every conversation, every photo, every voice message fed into AI and used for profit.” — Nov. 9 X post with 1.6 million views as of Nov. 19.

The facts: Meta announced a new policy to take effect Dec. 16, but that policy alone does not result in your direct messages, photos and voice messages being fed into its AI tool. The policy involves how Meta will customize users’ content and advertisements based on how they interact with Meta AI.

For example, if a user interacts with Meta’s AI chatbot about hiking, Meta might start showing that person recommendations for hiking groups or hiking boots.

But that doesn’t mean your data isn’t being used for AI purposes. Although Meta doesn’t use people’s private messages in Instagram, WhatsApp or Messenger to train its AI, it does collect user content that is set to “public” mode. This can include photos, posts, comments and reels. If the user’s Meta AI conversations involve religious views, sexual orientation and racial or ethnic origin, Meta says the system is designed to avoid parlaying these interactions into ads. If users ask questions of Meta AI using its voice feature, Meta says the AI tool will use the microphone only when users give permission.

There is a caveat: The tech company says its AI might use information about people who don’t have Meta product accounts if their information appears in other users’ public posts. For example, if a Meta user mentions a non-user in a public image caption, that photo and caption could be used to train Meta AI.

Can you opt out? No. If you use Meta platforms in these ways, by making some of your posts public and using the chatbot, your data could be used by Meta AI. There is no way to deactivate Meta AI in Instagram, Facebook or Threads. WhatsApp users can deactivate the option to talk with Meta AI, but only one chat at a time, through each chat’s advanced privacy settings.

The X post inaccurately advised people to submit a Meta form to opt out. But the form is simply a way for users to report when Meta’s AI supplies an answer that contains someone’s personal information.

David Evan Harris, who teaches AI ethics at the University of California, Berkeley, told PolitiFact that because the U.S. has no federal regulations about privacy and AI training, people have no standardized legal right to opt out of AI training in the way that people in countries such as Switzerland, the United Kingdom and South Korea do.

Even when social media platforms provide opt-out options for U.S. customers, it’s often difficult to find the settings to do so, Harris said.

Deleting your Meta accounts does not eliminate the possibility of Meta AI using your past public data, Meta’s spokesperson said.

Google

Social media claim: “Did you know Google just gave its AI access to read every email in your Gmail — even your attachments?” — Nov. 8 Instagram post with more than 146,000 likes as of Nov. 19.

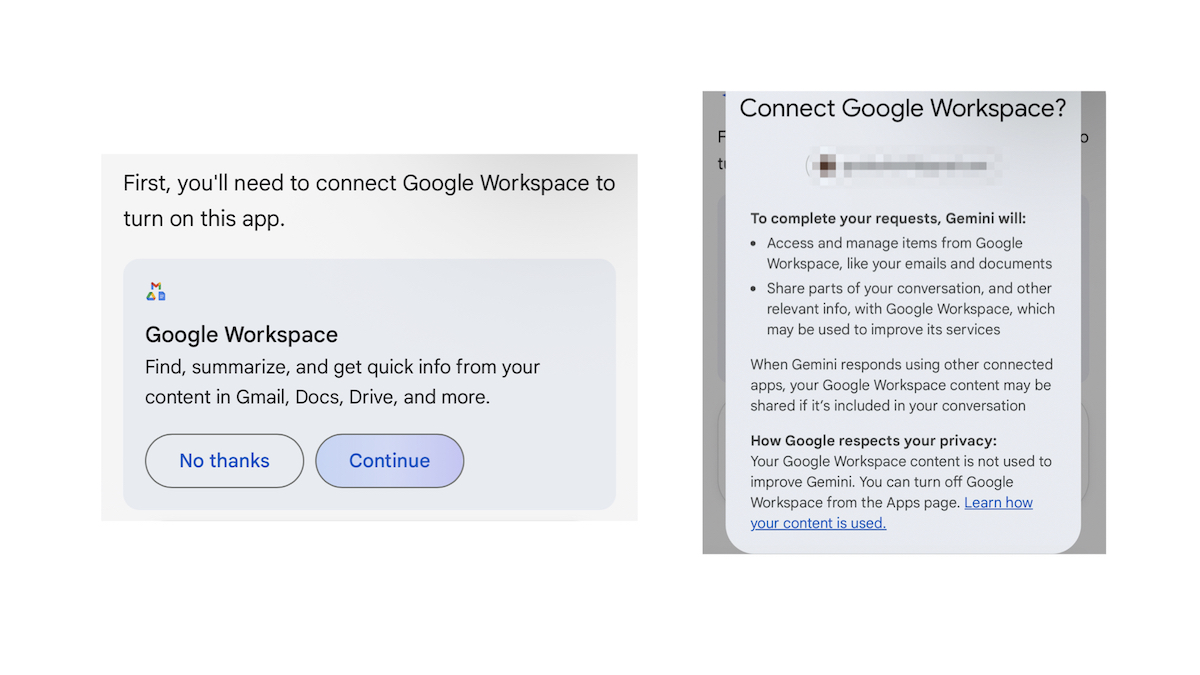

The facts: Google has a host of products that interact with private data in different ways. Google announced Nov. 5 that its AI product, Gemini Deep Research, can connect to users’ other Google products, including Gmail, Drive and Chat. But, as Forbes reported, users must first give permission to employ the tool.

Users who want to allow Gemini Deep Research to have access to private information across products can choose what data sources to employ, including Google search, Gmail, Drive and Google Chat.

There are other ways Google collects people’s data:

- Through searches and prompts in Gemini apps, including its mobile app, Gemini in Chrome or Gemini in another web browser
- Through any videos or photos the user uploads to Gemini
- Through interactions with apps such as YouTube and Spotify, if users give permission
- Through messaging and phone call apps, including call logs and message logs, if users give permission

A Google spokesperson told PolitiFact the company doesn’t use this information to train AI when registered users are under age 13.

Google can also access people’s data when they have smart features activated in their Gmail and Google Workspace settings (these are on by default in the U.S.). Smart features give Google consent to draw on email content and user activity data to help users compose emails or suggest Google Calendar events. With optional paid subscriptions, users can access additional AI features, including in-app Gemini summaries.

Turning off Gmail’s smart features can stop Google’s AI from accessing Gmail, but it doesn’t stop Google’s access on the Gemini app, which users can either download or access in a browser.

(Screenshot shows a permission pop-up that appeared in the Gemini app after a PolitiFact reporter asked Gemini to summarize an email. Gemini asked permission to access that email.)

A California lawsuit accuses Gemini of spying on users’ private communications. The lawsuit says an October policy change gives Gemini default access to private content such as emails and attachments in people’s Gmail, Chat and Meet. Before October, users had to manually allow Gemini to access the private content; now, users must go into their privacy settings to disable it. The lawsuit claims the Google policy update violates California’s 1967 Invasion of Privacy Act, a law that prohibits unauthorized wiretapping and recording confidential communications without consent.

Can you opt out? If people don’t want their conversations used to train Google AI, they can use “temporary” chats or chat without signing into their Gemini accounts. Doing that means Gemini can’t save a person’s chat history, a Google spokesperson said. Otherwise, opting out of having Google’s AI in Gmail, Drive and Meet requires turning off smart features in settings.

LinkedIn

Social media claim: Starting Nov. 3, “LinkedIn will begin using your data to train AI.” — Nov. 2 Instagram post with more than 18,000 likes as of Nov. 19.

The facts: LinkedIn, owned by Microsoft, announced on its website that starting Nov. 3, it will use some U.S. members’ data to train content-generating AI models.

The data the AI collects includes details from people’s profiles and public content users post.

The training does not draw on information from people’s private messages, LinkedIn said.

LinkedIn also said that, separate from the AI data access, Microsoft started receiving information about LinkedIn members, such as profile information, feed activity and ad engagement, as of Nov. 3 in order to target users with personalized ads.

Can you opt out? Yes. Autumn Cobb, a LinkedIn spokesperson, confirmed to PolitiFact that members can opt out if they don’t want their content used for AI training purposes. They can also opt out of receiving targeted, personalized ads.

To keep your data from being used for training purposes, go to data privacy, click on the option that says “Data for Generative AI Improvement” and then turn off the feature that says “use my data for training content creation AI models.”

And to opt out of personalized ads, go to advertising data in settings, and turn off both “ads off LinkedIn” and the option that says “data sharing with our affiliates and select partners.”