Washington (CNN)

Coming out of a three-hour closed-door Senate forum on artificial intelligence, Elon Musk, the head of a handful of tech companies, summarized the grave risks of AI.

“There’s some chance – above zero – that AI will kill us all. I think it’s low but there’s some chance,” Musk told reporters. “The consequences of getting AI wrong are severe.”

But he also said the meeting “may go down in history as being very important for the future of civilization.”

The session organized by Senate Majority Leader Chuck Schumer brought high-profile tech CEOs, civil society leaders and more than 60 senators together. The first of nine sessions aims to develop consensus as the Senate prepares to draft legislation to regulate the fast-moving artificial intelligence industry. The group included CEOs of Meta, Google, OpenAI, Nvidia and IBM.

All the attendees raised their hands — indicating “yes” — when asked whether the federal government should oversee AI, Schumer told reporters Wednesday afternoon. But consensus on what that role should be and specifics on legislation remained elusive, according to attendees.

Benefits and risks

Bill Gates spoke of AI’s potential to feed the hungry, and one unnamed attendee called for spending tens of billions on “transformational innovation” that could unlock AI’s benefits, Schumer said.

The challenge for Congress is to promote those benefits while mitigating the societal risks of AI, which include the potential for technology-based discrimination, threats to national security and even, as X owner Musk said, “civilizational risk.”

“You want to be able to maximize the benefits and minimize the harm,” Schumer said. “And that will be our difficult job.”

Senators emerging from the meeting said they heard a broad range of perspectives, with representatives from labor unions raising the issue of job displacement and civil rights leaders highlighting the need for an inclusive legislative process that provides the least powerful in society a voice.

Most agreed that AI could not be left to its own devices, said Washington Democratic Sen. Maria Cantwell.

“I thought Satya Nadella from Microsoft said it best: ‘When it comes to AI, we shouldn’t be thinking about autopilot. You need to have copilots.’ So who’s going to be watching this activity and making sure that it’s done correctly?”

Other areas of agreement reflected traditional tech industry priorities, such as increasing federal investment in research and development as well as promoting skilled immigration and education, Cantwell added.

But there was a noticeable lack of engagement on some of the harder questions, she said, particularly on whether a new federal agency is needed to regulate AI.

“There was no discussion of that,” she said, though several in the meeting raised the possibility of assigning some greater oversight responsibilities to the National Institute of Standards and Technology, a Commerce Department agency.

Musk told journalists after the event that he thinks a standalone agency to regulate AI is likely at some point.

“With AI we can’t be like ostriches sticking our heads in the sand,” Schumer said, according to prepared remarks obtained by CNN. He also noted this is “a conversation never before seen in Congress.”

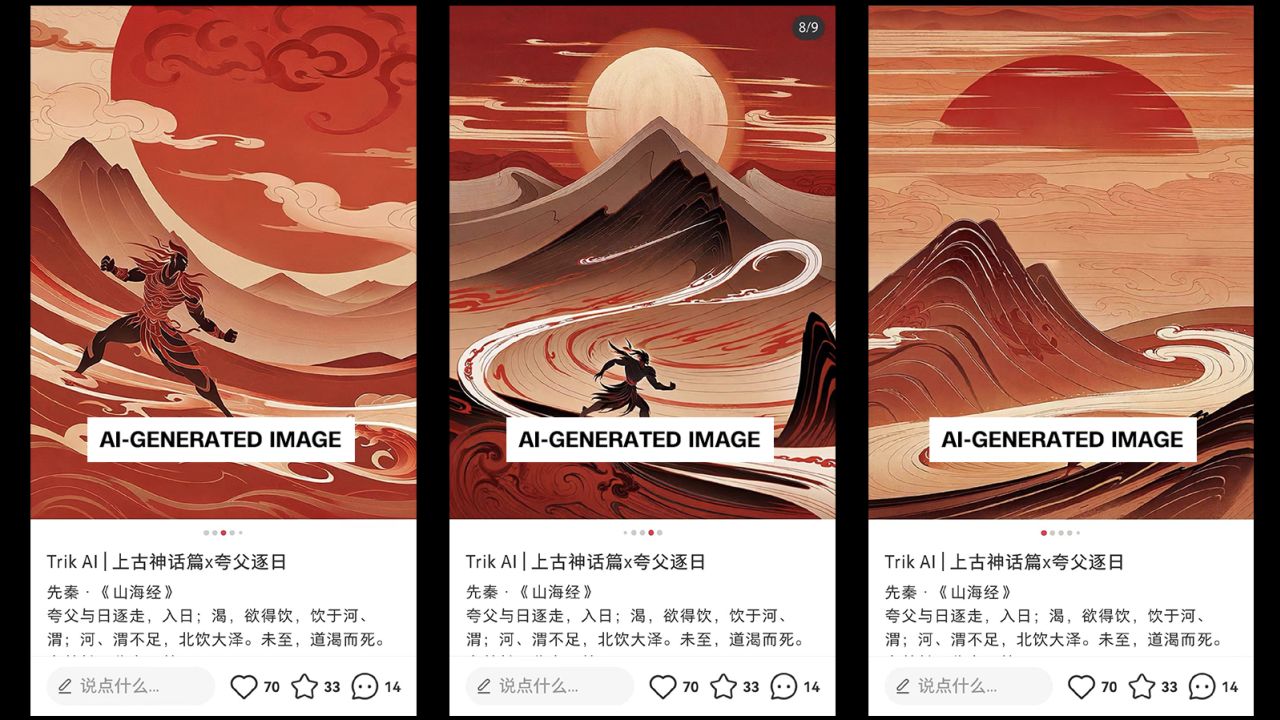

The push reflects policymakers’ growing awareness of how artificial intelligence, and particularly the type of generative AI popularized by tools such as ChatGPT, could disrupt business and everyday life in numerous ways, from increasing commercial productivity to threatening jobs, national security and intellectual property.

The high-profile guests trickled in shortly before 10 a.m., with Meta CEO Mark Zuckerberg pausing to chat with Nvidia CEO Jensen Huang outside the Senate Russell office building’s Kennedy Caucus Room. Google CEO Sundar Pichai was seen huddling with Delaware Democratic Sen. Chris Coons, while X owner Musk swept past a mass of cameras with a quick wave to the crowd. Inside, Musk was seated at the opposite end of the room from Zuckerberg, in what is likely the first time the two men have shared a room since they began challenging each other to a cage fight months ago.

The session at the US Capitol in Washington also gave the tech industry its most significant opportunity yet to influence how lawmakers design the rules that could govern AI.

Some companies, including Google, IBM, Microsoft and OpenAI, have already offered their own in-depth proposals in white papers and blog posts that describe layers of oversight, testing and transparency.

IBM’s CEO, Arvind Krishna, argued in the meeting that US policy should regulate risky uses of AI, as opposed to just the algorithms themselves.

“Regulation must account for the context in which AI is deployed,” he said, according to his prepared remarks.

Executives such as OpenAI CEO Sam Altman previously wowed some senators by publicly calling for new rules early in the industry’s lifecycle, which some lawmakers see as a welcome contrast to the social media industry that has resisted regulation.

Clement Delangue, co-founder and CEO of the AI company Hugging Face, tweeted last month that Schumer’s guest list “might not be the most representative and inclusive,” but that he would try “to share insights from a broad range of community members, especially on topics of openness, transparency, inclusiveness and distribution of power.”

Civil society groups have voiced concerns about AI’s possible dangers, such as the risk that poorly trained algorithms may inadvertently discriminate against minorities, or that they could ingest the copyrighted works of writers and artists without compensation or permission. Some authors have sued OpenAI over those claims, while others have asked in an open letter to be paid by AI companies.

Media companies such as CNN, The New York Times and Disney are among the content producers that have blocked ChatGPT from using their content. (OpenAI has said exemptions such as fair use apply to its training of large language models.)

“We will push hard to make sure it’s a truly democratic process with full voice and transparency and accountability and balance,” said Maya Wiley, president and CEO of the Leadership Conference on Civil and Human Rights, “and that we get to something that actually supports democracy; supports economic mobility; supports education; and innovates in all the best ways and ensures that this protects consumers and people at the front end — and just not try to fix it after they’ve been harmed.”

The concerns reflect what Wiley described as “a fundamental disagreement” with tech companies over how social media platforms handle misinformation, disinformation and speech that is either hateful or incites violence.

American Federation of Teachers President Randi Weingarten said America can’t make the same mistake with AI that it did with social media. “We failed to act after social media’s damaging impact on kids’ mental health became clear,” she said in a statement. “AI needs to supplement, not supplant, educators, and special care must be taken to prevent harm to students.”

Navigating those diverse interests will be Schumer, who along with three other senators — South Dakota Republican Sen. Mike Rounds, New Mexico Democratic Sen. Martin Heinrich and Indiana Republican Sen. Todd Young — is leading the Senate’s approach to AI. Earlier this summer, Schumer held three informational sessions for senators to get up to speed on the technology, including one classified briefing featuring presentations by US national security officials.

Wednesday’s meeting with tech executives and nonprofits marked the next stage of lawmakers’ education on the issue before they get to work developing policy proposals. In announcing the series in June, Schumer emphasized the need for a careful, deliberate approach and acknowledged that “in many ways, we’re starting from scratch.”

“AI is unlike anything Congress has dealt with before,” he said, noting the topic is different from labor, healthcare or defense. “Experts aren’t even sure which questions policymakers should be asking.”

Rounds said hammering out the specific scope of regulations will fall to Senate committees. Schumer added that the goal — after hosting more sessions — is to craft legislation over “months, not years.”

“We’re not ready to write the regs today. We’re not there,” Rounds said. “That’s what this is all about.”

A smattering of AI bills have already emerged on Capitol Hill and seek to rein in the industry in various ways, but Schumer’s push represents a higher-level effort to coordinate Congress’s legislative agenda on the issue.

New AI legislation could also serve as a potential backstop to voluntary commitments that some AI companies made to the Biden administration earlier this year to ensure their AI models undergo outside testing before they are released to the public.

But even as US lawmakers prepare to legislate by meeting with industry and civil society groups, they are already months if not years behind the European Union, which is expected to finalize a sweeping AI law by year’s end that could ban the use of AI for predictive policing and restrict how it can be used in other contexts.

A bipartisan pair of US senators sharply criticized the meeting, saying the process is unlikely to produce results and does not do enough to address the societal risks of AI.

Connecticut Democratic Sen. Richard Blumenthal and Missouri Republican Sen. Josh Hawley each spoke to reporters on the sidelines of the meeting. The two lawmakers recently introduced a legislative framework for artificial intelligence that they said represents a concrete effort to regulate AI — in contrast to what was happening steps away behind closed doors.

“This forum is not designed to produce legislation,” Blumenthal said. “Our subcommittee will produce legislation.”

Blumenthal added that the proposed framework — which calls for setting up a new independent AI oversight body, as well as a licensing regime for AI development and the ability for people to sue companies over AI-driven harms — could lead to a draft bill by the end of the year.

“We need to do what has been done for airline safety, car safety, drug safety, medical device safety,” Blumenthal said. “AI safety is no different — in fact, potentially even more dangerous.”

Hawley called Wednesday’s session “a giant cocktail party” for the tech industry and criticized the decision to hold it behind closed doors.

“I don’t know why we would invite all the biggest monopolists in the world to come and give Congress tips on how to help them make more money, and then close it to the public,” Hawley said. “I mean, that’s a terrible idea. These are the same people who have ruined social media.”

Despite talking tough on tech, Schumer has moved extremely slowly on tech legislation, Hawley said, pointing to several major tech bills from the last Congress that never made it to a Senate floor vote.

“It’s a little bit like antitrust the last two years,” Hawley said. “He talks about it constantly and does nothing about it. My sense is … this is a lot of song and dance that covers the fact that actually nothing is advancing. I hope I’m wrong about that.”

Hawley is also a co-sponsor of a bill introduced Tuesday led by Minnesota Democratic Sen. Amy Klobuchar that would prohibit generative AI from being used to create deceptive political ads. Klobuchar and Hawley, along with fellow co-sponsors Coons and Maine Republican Sen. Susan Collins, said the measure is needed to keep AI from manipulating voters.

Massachusetts Democratic Sen. Elizabeth Warren said the broad nature of the summit limited its potential.

“They’re sitting at a big, round table all by themselves,” Warren said of the executives and civil society leaders, while all the senators sat, listened and didn’t ask questions. “Let’s put something real on the table instead of everybody agree[ing] that we need safety and innovation.”

Schumer said that making the meeting confidential was intended to give lawmakers the chance to hear from the outside in an “unvarnished way.”