UNITED NATIONS — Just a few years ago, artificial intelligence got barely a mention at the U.N. General Assembly’s convocation of world leaders.

But after the release of ChatGPT last fall turbocharged both excitement and anxieties about AI, it’s been a sizzling topic this year at diplomacy’s biggest yearly gathering.

Presidents, premiers, monarchs and cabinet ministers convened as governments at various levels are mulling or have already passed AI regulation. Industry heavy-hitters acknowledge guardrails are needed but want to protect the technology’s envisioned benefits. Outsiders and even some insiders warn that there also are potentially catastrophic risks, and everyone says there’s no time to lose.

And many eyes are on the United Nations as perhaps the only place to tackle the issue at scale.

The world body has some unique attributes to offer, including unmatched breadth and a track record of brokering pacts on global issues, and it’s set to launch an AI advisory board this fall.

“Having a convergence, a common understanding of the risks, that would be a very important outcome,” U.N. tech policy chief Amandeep Gill said in an interview. He added that it would be very valuable to reach a common understanding on what kind of governance works, or might, to minimize risks and maximize opportunities for good.

As recently as 2017, only three speakers brought up AI at the assembly meeting’s equivalent of a main stage, the “General Debate.” This year, more than 20 speakers did so, representing countries from Namibia to North Macedonia, Argentina to East Timor.

Secretary-General António Guterres teased plans to appoint members this month to the advisory board, with preliminary recommendations due by year’s end — warp speed, by U.N. standards.

Lesotho’s premier, Sam Matekane, worried about threats to privacy and safety, Nepalese Prime Minister Pushpa Kamal Dahal about potential misuse of AI, and Icelandic Foreign Minister Thórdís Kolbrún R. Gylfadóttir about the technology “becoming a tool of destruction.” Britain hyped its upcoming “AI Safety Summit,” while Spain pitched itself as an eager host for a potential international agency for AI and Israel touted its technological chops as a prospective developer of helpful AI.

Days after U.S. senators discussed AI behind closed doors with tech bigwigs and skeptics, President Joe Biden said Washington is working “to make sure we govern this technology — not the other way around, having it govern us.”

And with the General Assembly as a center of gravity, there were so many AI-policy panel discussions and get-togethers around New York last week that attendees sometimes raced from one to another.

“The most important meetings that we are having are the meetings at the U.N. — because it is the only body that is inclusive, that brings all of us here,” Omar Al-Olama, the United Arab Emirates’ minister for artificial intelligence, said at a U.N.-sponsored event featuring four high-ranking officials from various countries. It drew such interest that a half-dozen of their counterparts offered comments from the audience.

Tech industry players have made sure they’re in the mix during the U.N.’s big week, too.

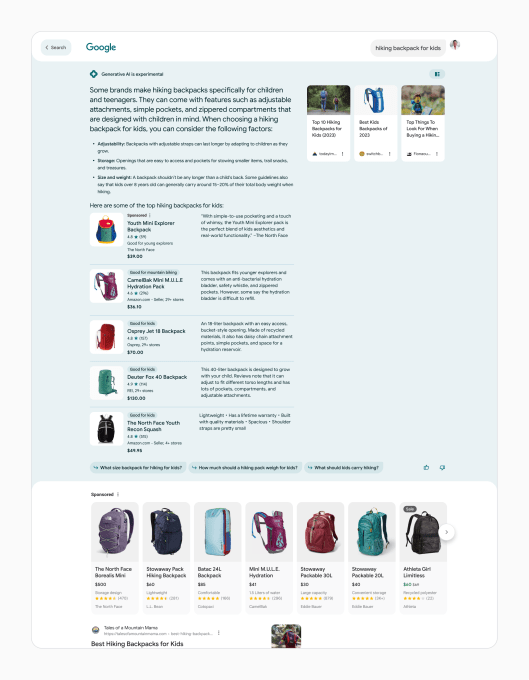

“What’s really encouraging is that there’s so much global interest in how to get this right — and the U.N. is in a position to help harmonize all the conversations” and work to ensure all voices get heard, says James Manyika, a senior vice president at Google. The tech giant helped develop a new, artificial intelligence-enabled U.N. site for searching data and tracking progress on the world body’s key goals.

But if the United Nations has advantages, it also has the challenges of a big-tent, consensus-seeking ethos that often moves slowly. Plus its members are governments, while AI is being driven by an array of private companies.

Still, a global issue needs a global forum, and “the U.N. is absolutely a place to have these conversations,” says Ian Bremmer, president of the Eurasia Group, a political risk advisory firm.

Even if governments aren’t developers, Gill notes that they can “influence the direction that AI takes.”

“It’s not only about regulating against misuse and harm, making sure that democracy is not undermined, the rule of law is not undermined, but it’s also about promoting a diverse and inclusive innovation ecosystem” and fostering public investments in research and workforce training where there aren’t a lot of deep-pocketed tech companies doing so, he said.

The United Nations will have to navigate territory that some national governments and blocs, including the European Union and the Group of 20 industrialized nations, already are staking out with summits, declarations and in some cases regulations of their own.

Ideas differ about what a potential global AI body should be: perhaps an expert assessment and fact-establishing panel, akin to the Intergovernmental Panel on Climate Change, or a watchdog like the International Atomic Energy Agency? A standard-setting entity similar to the U.N.’s maritime and civil aviation agencies? Or something else?

There’s also the question of how to engender innovation and hoped-for breakthroughs — in medicine, disaster prediction, energy efficiency and more — without exacerbating inequities and misinformation or, worse, enabling runaway-robot calamity. That sci-fi scenario started sounding a lot less far-fetched when hundreds of tech leaders and scientists, including the CEO of ChatGPT maker OpenAI, issued a warning in May about “the risk of extinction from AI.”

An OpenAI exec-turned-competitor then told the U.N. Security Council in July that artificial intelligence poses “potential threats to international peace, security and global stability” because of its unpredictability and possible misuse.

Yet there are distinctly divergent vantage points on where the risks and opportunities lie.

“For countries like Nigeria and the Global South, the biggest issue is: What are we going to do with this amazing technology? Are we going to get the opportunity to use it to uplift our people and our economies equally and on the same pace as the West?” Nigeria’s communications minister, Olatunbosun Tijani, asked at an AI discussion hosted by the New York Public Library. He suggested that “even the conversation on governance has been led from the West.”

Chilean Science Minister Aisén Etcheverry believes AI could allow for a digital do-over, a chance to narrow gaps that earlier tech opened in access, inclusion and wealth.

But it will take more than improving telecommunications infrastructure. Countries that got left behind before need to have “the language, culture, the different histories that we come from, represented in the development of artificial intelligence,” Etcheverry said at the U.N.-sponsored side event.

Gill, who’s from India, shares those concerns. Dialogue about AI needs to expand beyond a “promise and peril” dichotomy to “a more nuanced understanding where access to opportunity, the empowerment dimension of it … is also front and center,” he said.

Even before the U.N. advisory board sets a detailed agenda, plenty of suggestions were volunteered amid the curated conversations around the General Assembly. Work on global minimum standards for AI. Align the various regulatory and enforcement endeavors around the globe. Look at setting up AI registries, validation and certification. Focus on regulating uses rather than the technology itself. Craft a “rapid-response mechanism” in case dreaded possibilities come to pass.

From Dr. Rose Nakasi’s vantage point, though, there was a clear view of the upsides of AI.

The Ugandan computer scientist and her colleagues at Makerere University’s AI Lab are using the technology to streamline microscopic analysis of blood samples, the gold-standard method for diagnosing malaria.

Their work is aimed at countries without enough pathologists, especially in rural areas. A magnifying eyepiece, produced by 3D printing, fits cellphone cameras and takes photos of microscope slides; AI image analysis then picks out and identifies pathogens. Google’s charitable arm recently gave the lab $1.5 million.

AI is “an enabler” of human activity, Nakasi said between attending General Assembly-related events.

“We can’t be able to just leave it to do each and every thing on its own,” she said. “But once it is well regulated, where we have it as a support tool, I believe it can do a lot.”