There are two kinds of people in the world: those who read books and those who don't. For me, books are tiring, and I can never discipline myself to finish an entire book on my own. However, I have a secret method that not only helps me read books faster but also helps me understand complex ideas and analogies that I would have missed on my own. So this article is for every bibliophile and aspiring reader, because I will show you how to give your reading a boost with AI.

Using AI to Read Books

AI has enabled us to do a lot more, and "reading books with AI" might give you the impression that I am talking about summarising a book in a few paragraphs. I assure you that is not the case. When I talk about reading with AI's help, I mean understanding the ideas behind the book: simplifying the head-scratching chapter that left you confused, or even connecting different books in the same series or by the same author. There is a lot you can achieve with AI, so gear up, get your best book out, and let us look at the best ways to use AI while reading.

1. Simplification of Complex Ideas

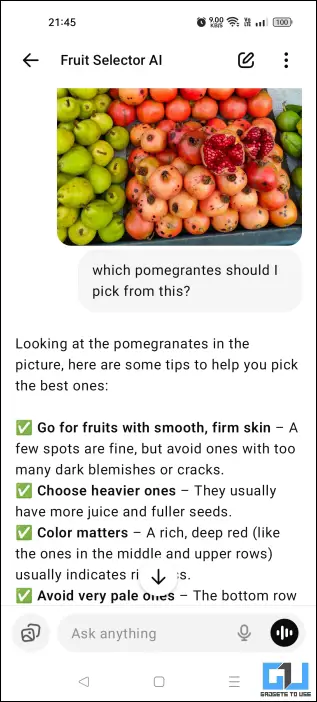

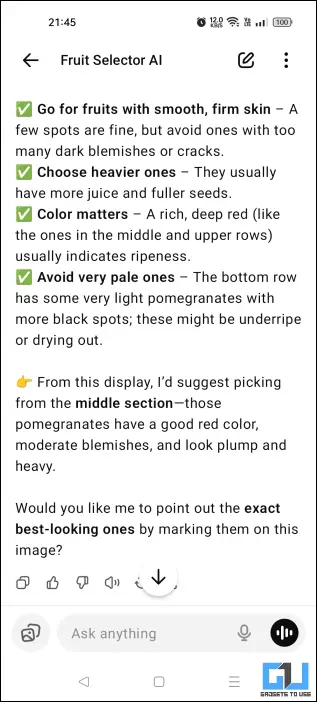

Some of the books we take on are complex and not easy to understand. It can be tough to find an explanation of a particular phrase, or even a whole chapter, online, especially for a niche book. With ChatGPT, however, you can get one easily. Just follow the steps below.

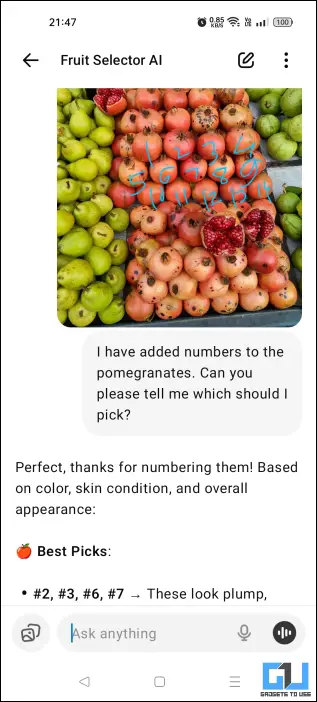

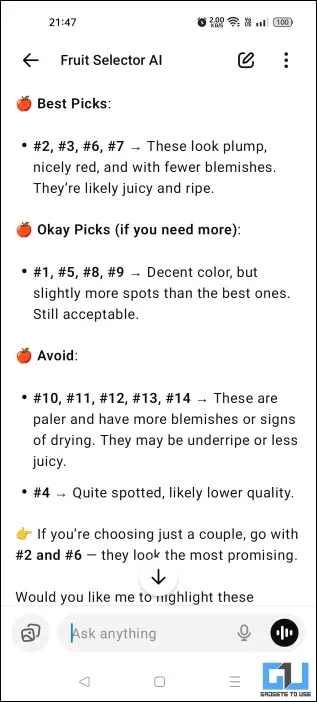

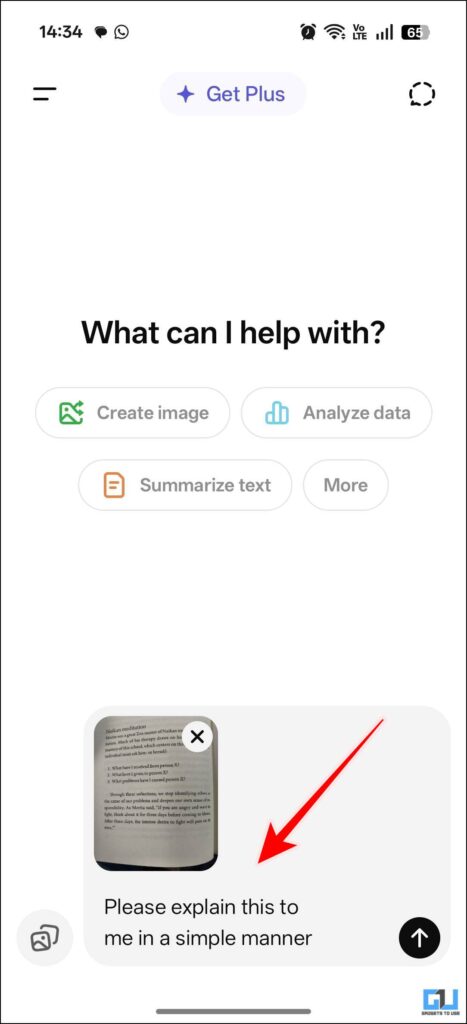

1. Open ChatGPT and upload photos or screenshots of the relevant pages.

2. After uploading the images, ask ChatGPT to explain the passage in simpler terms.

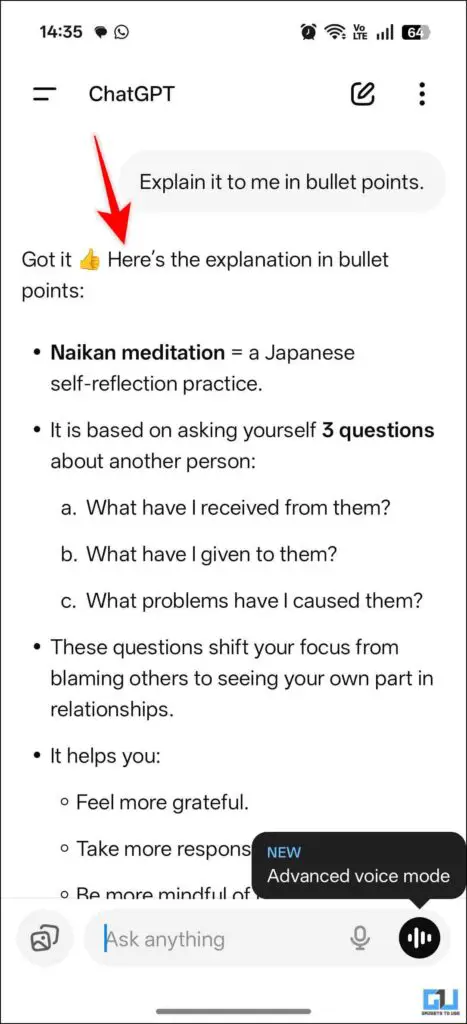

3. You can even ask ChatGPT to give you bullet points for better understanding.

4. If that still does not help, switch to Advanced Voice Mode and talk through your doubts in a conversation.

2. Using ChatGPT to Confirm Analogies

You can also use ChatGPT to double-check your own analogies and see whether you misinterpreted anything or made any errors. Type in your observations or analogies and get instant feedback on them. This is a great way to grasp the depth of the book you are reading, and it will build your confidence and encourage you to dig deeper into your next read.

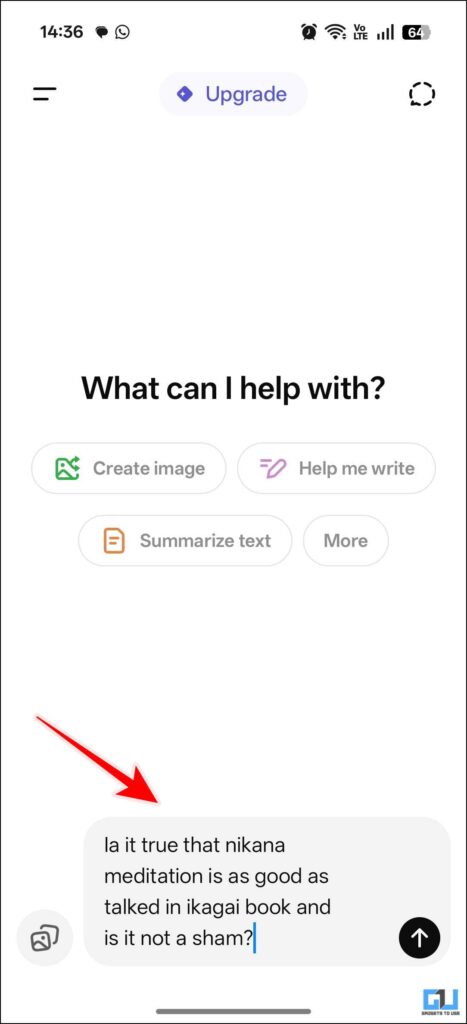

1. Open ChatGPT and type in your analogies or theories.

2. Then ask ChatGPT to check your analogies and give feedback on them with reference to the book you are reading.

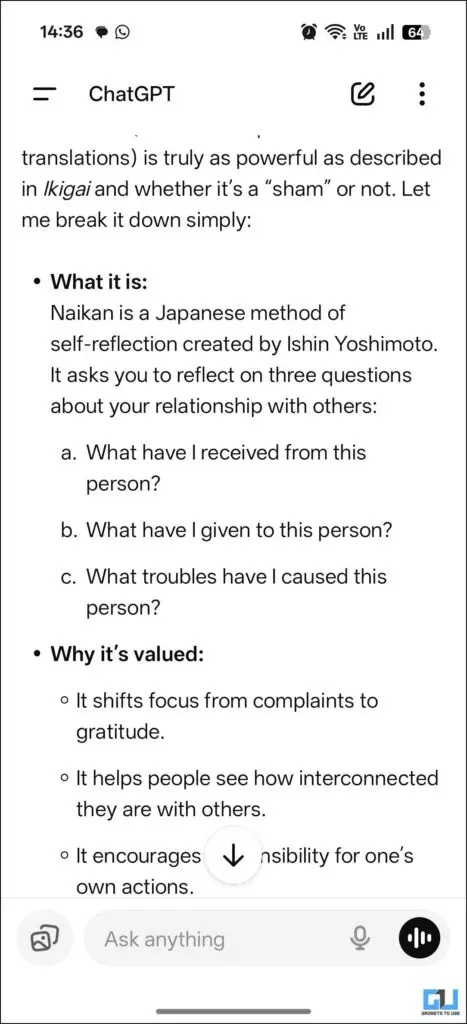

3. The response will clarify your understanding and point out any mistakes you might have made.

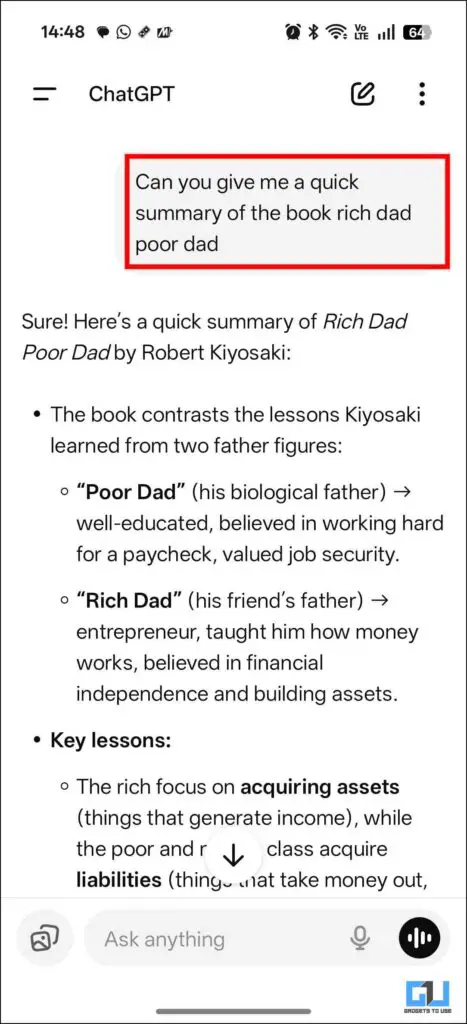

3. Summarize Lengthy Books

The best part of having ChatGPT as your reading buddy is that you can skip boring chapters with zero judgment. Another bonus is that you can simply ask for a quick summary of the chapter, so you are less likely to miss its key points. You can upload the book as a PDF, paste the text directly into the chat, or even use photos of the pages. Ask it to summarise, and it will give you a summary of the chapter you want.

FAQs

Q. What is ChatGPT Go?

ChatGPT Go is an India-exclusive subscription plan that costs INR 399 per month. Compared with the free version, it gives you more access to popular features such as GPT-5, image generation, and file uploads.

Q. How many images can I generate in the free plan of ChatGPT?

You can generate up to three images in a day with the free plan of ChatGPT. If you want to do more than that, you can pay for a subscription plan.

Wrapping Up

This article discusses why ChatGPT makes a great reading partner and how you can use it to speed up your reading. You can fact-check, summarise, and even test out your analogies with it. You can even use it to quiz your friends from the book club. So do check it out.

Dev Chaudhary