
Artificial intelligence may be smarter than ever, but that power could be turned against us. Former Google CEO Eric Schmidt is sounding the alarm, warning that AI systems can be hacked and retrained in ways that make them dangerous.

Speaking at the Sifted Summit 2025 in London, Schmidt explained that advanced AI models can have their safeguards removed.

“There’s evidence that you can take models, closed or open, and you can hack them to remove their guardrails,” he said. “In the course of their training, they learn a lot of things. A bad example would be they learn how to kill someone.”


Sign up for my FREE CyberGuy Report

Get my best tech tips, urgent security alerts, and exclusive deals delivered straight to your inbox. Plus, you’ll get instant access to my Ultimate Scam Survival Guide — free when you join my CYBERGUY.COM/NEWSLETTER

When AI guardrails fail

Schmidt praised major AI companies for blocking dangerous prompts: “All of the major companies make it impossible for those models to answer that question. Good decision. Everyone does this. They do it well, and they do it for the right reasons.”

But he warned that even strong defenses can be reversed.

“There’s evidence that they can be reverse-engineered,” he added, noting that hackers could exploit that weakness. Schmidt compared today’s AI race to the early nuclear era, when a powerful technology spread with few global controls. “We need a non-proliferation regime,” he urged, to keep rogue actors from abusing these systems.

Former Google CEO Eric Schmidt warns that hacked AI could learn dangerous behaviors. (Eugene Gologursky/Getty Images)

The rise of AI jailbreaks

Schmidt’s concern isn’t theoretical. In 2023, a jailbreak prompt called DAN, short for “Do Anything Now,” spread online. It coaxed ChatGPT into bypassing its safety rules and answering nearly any prompt. Some versions even had users “threaten” the chatbot with digital death if it refused, a bizarre demonstration of how fragile AI safeguards can be when someone deliberately sets out to manipulate them. Schmidt warned that without enforcement, jailbroken or rogue models could spread unchecked and be used for harm by bad actors.


Big Tech leaders share the same fear

Schmidt isn’t alone in his anxiety about artificial intelligence. In 2023, Elon Musk said there’s a “non-zero chance of it going Terminator.”

“It’s not 0%,” Musk told interviewers. “It’s a small likelihood of annihilating humanity, but it’s not zero. We want that probability to be as close to zero as possible.”

Schmidt has also spoken of AI as an “existential risk.” At another event, he said, “My concern with AI is actually existential, and existential risk is defined as many, many, many, many people harmed or killed.” Yet he has also acknowledged AI’s potential to benefit humanity if handled responsibly. At Axios’ AI+ Summit, he remarked, “I defy you to argue that an AI doctor or an AI tutor is a negative. It’s got to be good for the world.”

Tips to protect yourself from AI misuse

You can protect yourself from the risks tied to unsafe or hacked AI systems. Here’s how:

1) Stick with trusted AI platforms

Use tools and chatbots from reputable companies with transparent safety policies. Avoid experimental or “jailbroken” AI models that promise unrestricted answers.

2) Protect your data and consider using a data removal service

Never share personal, financial or sensitive information with unknown or unverified AI tools. Treat them like you would any online service, with caution. To add an extra layer of security, consider using a data removal service to wipe your personal details from data broker sites that sell or expose your information. This helps limit what hackers and AI scrapers can learn about you online.

While no service can guarantee the complete removal of your data from the internet, a data removal service is really a smart choice. These services aren’t cheap, and neither is your privacy. They do all the work for you by actively monitoring and systematically erasing your personal information from hundreds of websites. It’s what gives me peace of mind, and I’ve found it to be one of the most effective ways to erase your personal data from the internet. By limiting the information available, you reduce the risk of scammers cross-referencing data from breaches with information they might find on the dark web, making it harder for them to target you.


Check out my top picks for data removal services and get a free scan to find out if your personal information is already out on the web by visiting Cyberguy.com/Delete

Get a free scan to find out if your personal information is already out on the web: Cyberguy.com/FreeScan

Experts fear weak guardrails could let rogue AI models go unchecked. (Cyberguy.com)

3) Use trusted antivirus software

AI-driven scams and malicious links are growing. Strong antivirus software can block fake AI downloads, phishing attempts and malware that hackers use to hijack your devices or train rogue AI models. Keep it updated and run regular scans.

The best way to safeguard yourself from malicious links that install malware and may access your private information is to have strong antivirus software installed on all your devices. This protection can also alert you to phishing emails and ransomware scams, keeping your personal information and digital assets safe.

Get my picks for the best 2025 antivirus protection winners for your Windows, Mac, Android & iOS devices at Cyberguy.com/LockUpYourTech

4) Check permissions

When using AI apps, review what data they can access. Disable unnecessary permissions like location tracking, microphone use or full file access.

5) Watch for deepfakes

AI-generated images and voices can impersonate real people. Verify sources before trusting videos, messages or “official” announcements online.

6) Keep software updated

Security patches help prevent hackers from exploiting vulnerabilities that could compromise AI models or your personal data.


What this means for you

AI safety isn’t a problem reserved for tech insiders; it affects everyone who interacts with digital systems. Whether you’re using voice assistants, chatbots or photo filters, it’s important to know where your data goes and how it’s protected. Responsible use starts with you. Understand what AI tools you’re using and make choices that prioritize security and privacy.

Take my quiz: How safe is your online security?

Think your devices and data are truly protected? Take this quick quiz to see where your digital habits stand. From passwords to Wi-Fi settings, you’ll get a personalized breakdown of what you’re doing right and what needs improvement. Take my Quiz here: Cyberguy.com/Quiz

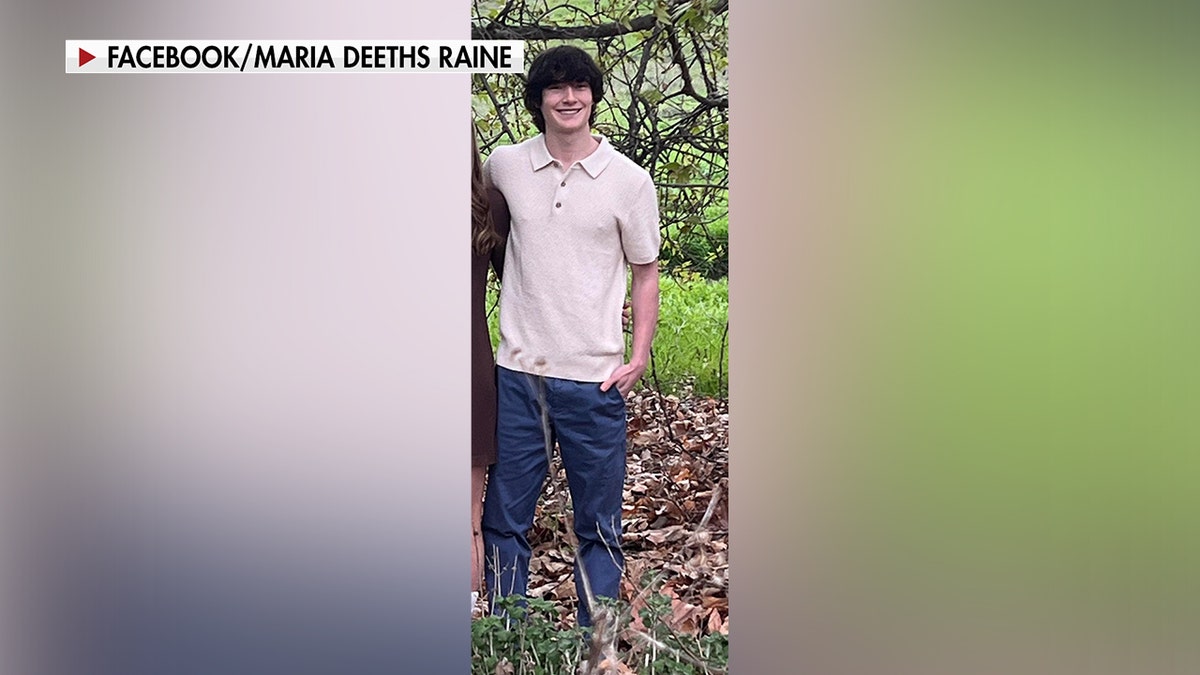

Leaders call for global rules to keep artificial intelligence under control. (Stanislav Kogiku/SOPA Images/LightRocket via Getty Images)

Kurt’s key takeaways

Artificial intelligence has the potential to do incredible good, but also great harm if misused. The challenge now is to keep innovation and ethics in balance. As AI continues to advance, the key will be building systems that remain safe, transparent and firmly under human control.

Would you trust AI to make life-or-death decisions, or do you think humans should always stay in charge? Let us know by writing to us at Cyberguy.com/Contact



New! Join me on my podcast, Beyond Connected, as we explore the most fascinating breakthroughs in tech and the people behind them. New episodes every Wednesday at getbeyondconnected.com.

Copyright 2025 CyberGuy.com. All rights reserved.

Kurt “CyberGuy” Knutsson is an award-winning tech journalist with a deep love of technology, gear and gadgets that make life better. He contributes to Fox News & FOX Business, beginning mornings on “FOX & Friends.” Got a tech question? Get Kurt’s free CyberGuy Newsletter, share your voice, a story idea or comment at CyberGuy.com.