Tech giants’ efforts to ramp up AI adoption in India may be about to hit a turning point, as companies end free promotions in hopes of converting the world’s fourth-largest economy into a windfall of paid subscribers.

India became the world’s largest market for generative AI app downloads in 2025, according to market intelligence firm Sensor Tower, widening its lead over the U.S. as installs jumped 207% year-over-year.

Companies including OpenAI, Google, and Perplexity rolled out extended free premium offers to accelerate user growth in the price-sensitive market. Leading AI firms have also backed India in its push to become a global artificial intelligence hub. A major AI summit in New Delhi last week was attended by leaders including OpenAI’s Sam Altman, Anthropic’s Dario Amodei, and Alphabet CEO Sundar Pichai, a sign of the country’s growing weight in the global AI race.

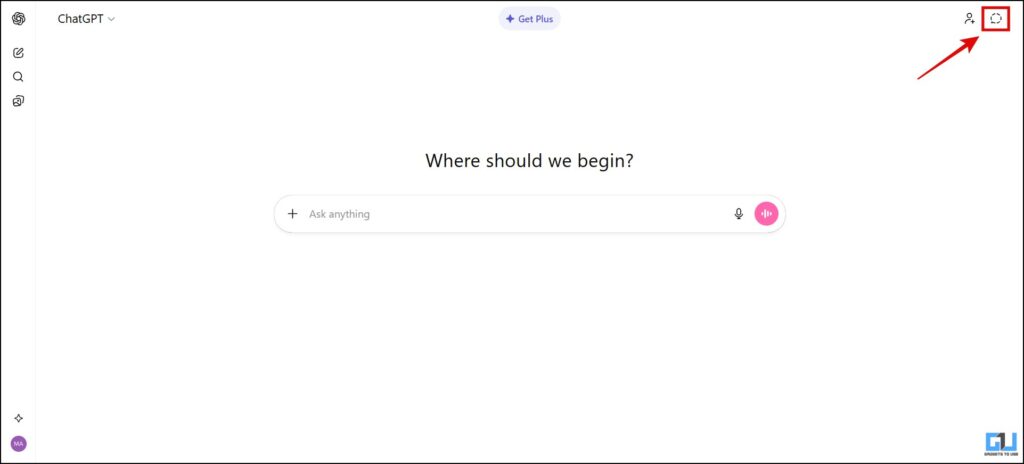

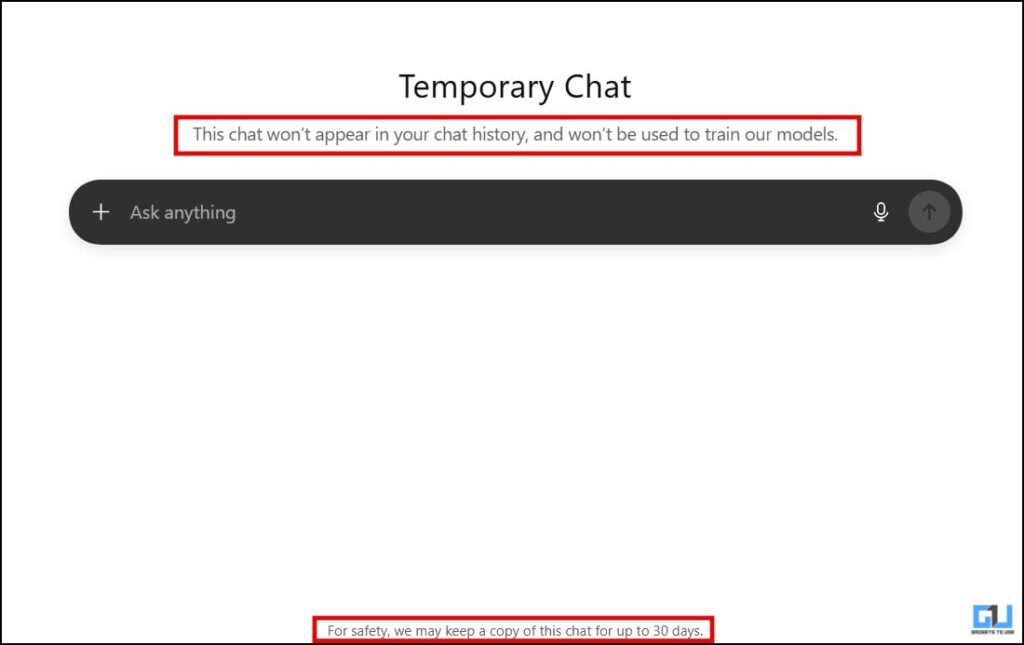

Now, some of those early promotional pushes are winding down. Perplexity ended its bundled Pro offer with Indian telco Airtel in January, while OpenAI’s free ChatGPT Go access in India is no longer available, potentially setting the stage for a clearer test of how many newly acquired users convert to paying subscribers.

Despite strong download growth, India still generates a disproportionately small share of AI app revenue. The country accounts for about 1% of in-app purchases even as it drives roughly 20% of global GenAI app downloads, according to Sensor Tower data shared with TechCrunch, which highlights the monetization challenge in one of the industry’s fastest-growing markets.

GenAI app adoption in India accelerated sharply through 2025, with downloads peaking in September and October at year-over-year growth rates of about 320% and 260%, respectively, according to the data. Yet the surge in usage did not fully translate into revenue gains. In November and December 2025, in-app purchase revenue from AI apps in India fell 22% and 18% month over month, respectively. ChatGPT’s revenue dropped even more sharply, falling 33% and 32% in the same two months after the November launch of free access to its sub-$5 ChatGPT Go plan, a decline that reflects the near-term impact of aggressive promotional pushes.

ChatGPT still commands more than 60% of GenAI in-app revenue in India, meaning shifts in its pricing strategy can significantly influence overall market performance.

Alongside promotional pushes, Sensor Tower attributed the surge in GenAI app adoption in India last year to a mix of new product launches, including the debut of platforms such as DeepSeek, Grok, and Meta AI, as well as upgrades to major chatbots like ChatGPT, Gemini, Claude, and Perplexity. Viral interest in AI-generated content also helped fuel adoption, with content creation and editing tools accounting for seven of the 20 most downloaded GenAI apps in India in 2025.

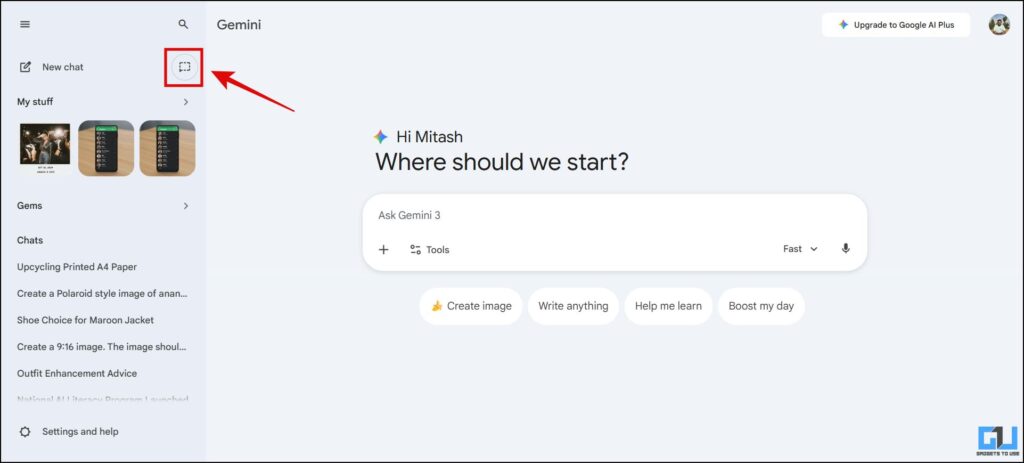

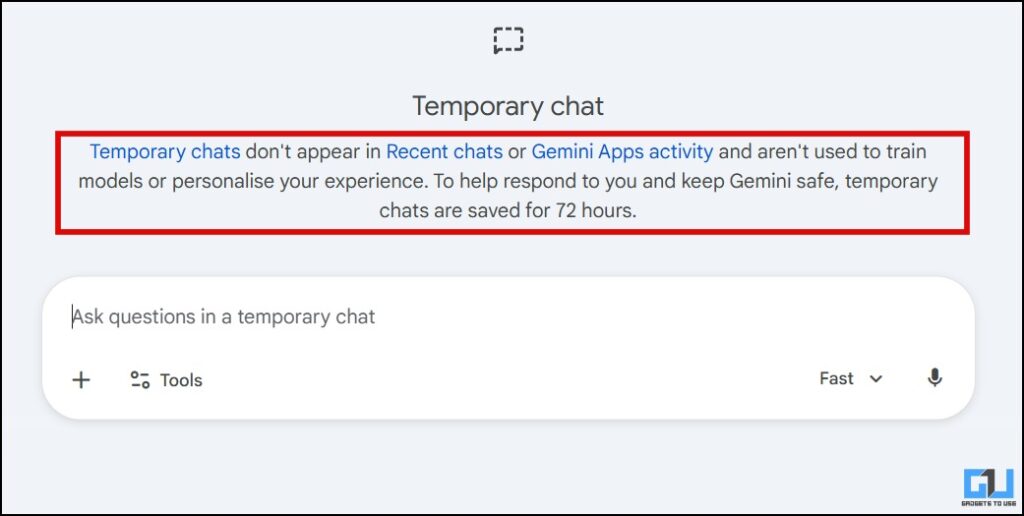

The user surge has been equally pronounced. India accounted for about 19% of the global user base of leading AI assistant apps in 2025, ahead of the U.S. at 10%, Sensor Tower said. ChatGPT continues to dominate the Indian market by monthly active users, though rivals including Google’s Gemini and Perplexity have also seen rapid growth following promotional offers. ChatGPT was the most downloaded GenAI app in India and globally in 2025, according to earlier Sensor Tower data. Earlier this month, OpenAI’s CEO said that the chatbot now has more than 100 million weekly active users in India.

The promotional push in India reflects a broader strategy by AI firms to reduce pricing friction in a highly value-conscious market, betting that early user adoption and engagement will translate into stronger long-term retention once free access periods expire, said Sneha Pandey, insights analyst at Sensor Tower.

India’s appeal lies in its massive digital base. The country has more than a billion internet users and around 700 million smartphone owners, making it one of the largest potential markets for AI services globally and a critical battleground for user growth.

Nonetheless, user engagement in India still trails more mature markets. In 2025, users of leading AI chatbot apps in the U.S. spent about 21% more time per week on the apps than their counterparts in India and logged 17% more sessions on average, per Sensor Tower.

“AI in-app revenues will likely see meaningful but gradual improvement as users become more deeply integrated into these platforms, making sustained engagement paramount,” Pandey told TechCrunch.

She added that pricing pressure in India is likely to remain elevated given the country’s young and value-conscious user base, making lower-cost tiers, telecom bundles, and micro-transaction models important for long-term retention.

ChatGPT remained the clear market leader in India entering 2026, with 180 million monthly active users in January, per Sensor Tower, followed by Google’s Gemini with 118 million, Perplexity with 19 million, and Meta AI with 12 million. The figures underline both the scale of India’s AI opportunity and the growing challenge for firms to convert rapid user adoption into sustained revenue.

Google, OpenAI, and Perplexity did not respond to requests for comment.

Jagmeet Singh
