There are so many brewing methods to choose from (French press, the currently trendy dalgona whipped, pour-over), but many coffee lovers still rely on the classic, automatic drip for their daily fix. That’s why we tested the best-rated drip coffee makers using a wide range of criteria (outlined below) over the course of several weeks. Bags upon bags of dark roast, light roast and medium roast coffee beans were ground and brewed. We made full carafes, half carafes and single cups. And we tasted the results black, with cow’s milk, almond milk, sweetened condensed milk, cold-brew strength over ice — you name it.

Many, many pots of coffee later, we settled on four standout drip coffee machines.

Best drip coffee maker overall

The Braun KF6050WH BrewSense Drip Coffee Maker produced consistently delicious, hot cups of coffee, brewed efficiently and cleanly, from sleek, relatively compact hardware that is turnkey to operate, and all for a reasonable price.

Runner-up with a modern bent

The Cuisinart Touchscreen 14-Cup Programmable was, to our eye, the most handsome and minimally designed of the straightforward auto-brewers, delivering a clean, tasty cup. It lost first place only because the touchscreen may not be for every consumer, and its brew time is significantly longer than that of the other machines we tried out.

Luxury pick for the design-obsessed

In just about five minutes, the Technivorm Moccamaster 59636 KBG Coffee Brewer turns out a whole pot of pretty perfectly brewed coffee, and the process is as entrancing as a targeted Netflix trailer.

Best affordable drip coffee maker

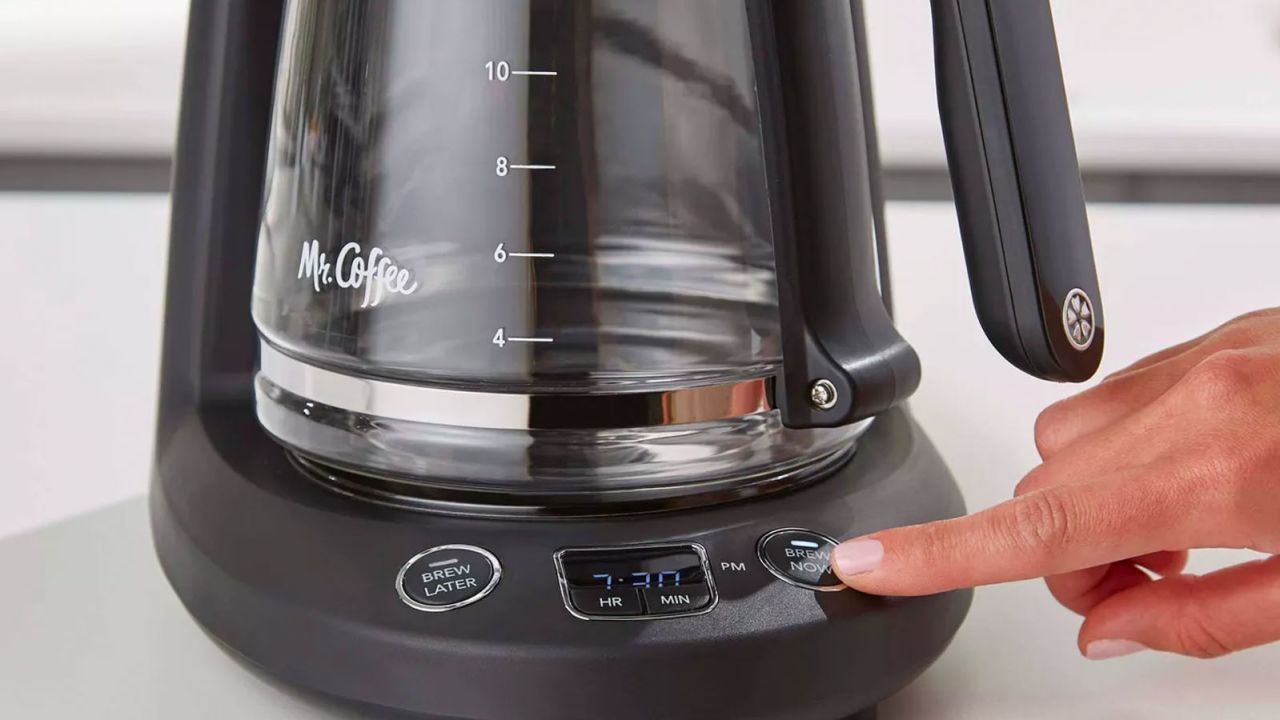

One of the cheapest options we tested, the Mr. Coffee 12-cup brewer is compact, simple to operate and yields a very competitive cup.

We brewed countless pots of coffee with the BrewSense, ranging from light to dark roast, and each one yielded a strong, delicious cup with no sediment, thanks to the gold-tone filter, designed to remove the bitterness from coffee as well as reduce single-use paper-filter waste. The machine we tested was white (a nice option for those with a more modern kitchen design), but it also comes in black, and it’s compact enough to fit under the cabinets in a smaller space, unlike some of the more cumbersome machines we tested.

The BrewSense is straightforward to operate: It’s designed like a traditional automatic drip machine with manual operating buttons, but with a sleek, modern upgrade. The hardware is a sophisticated combination of brushed metal and plastic, with a glass carafe that feels comfortable in the hand.

The BrewSense doesn’t have a lot of bells and whistles compared to some of the machines we tested, and that functional ease helped elevate it to the top of our list. You could unbox this machine, flush it through with water once, and be drinking a freshly brewed cup within 15 minutes, all without reading the manual. Brewing is also a nearly silent process, which can be pleasing on early mornings. Some consumers may want a machine loaded with special features, but for those who just want delicious, hot coffee every morning, without spending over a hundred bucks, this is your best bet.

The BrewSense isn’t perfect: It’s not the fastest we tested — to brew a full pot of 12 cups took upwards of 11 minutes. And we found an annoying error in the instruction manual around how to program the clock (call us rigid, but we insisted on programming the time before using each of the machines!); the directions read to press and hold CLOCK and then SET, but that didn’t work. We had to simply press and hold the CLOCK button and then sort of trial and error our way through the hours and minutes. Meanwhile, the auto-program setup is not as obvious as we’d have liked; though once we got it, it worked like a dream. But otherwise, we found this machine intuitive and easy to operate even without the instruction manual.

Cleanup could at times be a little messier than some of our other machines. The hot water comes up through the filter basket and spreads the grounds up to the top of the cone, and during one brewing, a tiny bit rose up outside the cone so the top of the brew apparatus needed a little wipedown. Overall, though, for less than $80, this machine delivers the best bang for your buck of anything on the market.

Coming in just a few points behind the Braun BrewSense was one of the three Cuisinart automatic drip machines we tested: the Touchscreen 14-Cup Programmable.

We rated all three Cuisinarts highly, but the Touchscreen ranked highest for its combination of progressive design and everyday efficacy. All the Cuisinart products we encountered were well designed, but this one feels special, like when you unbox a brand-new Apple product: Its all-black, shiny surfaces and touchscreen control panel look and feel next-level for an everyday coffee maker (and the price, $235 at Macy’s, more than three times that of the Braun, reflects that).

But this isn’t just a fancy, aesthetically pleasing machine: It brewed strong, delicious coffee that tasted cleanly filtered but rich. It’s also relatively easy to program and use, given its tech-centric platform. The touchscreen panel features cute little icons signifying one-touch commands to help customize your brew: If you like your coffee bolder, you can select the BOLD feature; if you’re brewing less than half a pot, select the 1 to 4 cups feature for a slower brew with the proper extraction time; adjust the hot plate temperature to low, medium or high; turn the audible brew-cycle-finished tone on or off.

That tech-centric design is also one of the reasons this didn’t come in at number one, however. As exciting and different as it felt, we did feel that this machine — the only touchscreen model we tested — would feel less intuitive and more laborious than some consumers would want as part of their morning coffee routine. The touchscreen goes dark during the brew process, which yes, is nice-looking, but also feels a bit jarring, like you’re literally in the dark, asking yourself, “What’s going on? Is coffee brewing?” The settings and operating buttons are clear enough when illuminated, but it did take us a few times brewing to get used to how much pressure you need to apply with your fingertip to the touchscreen. We could easily think of people in our own lives who would be flummoxed by this machine if left alone with it and a bag of coffee — and for that, it lost a few points in functionality.

Also, like its Cuisinart cousins we tested, this one’s a slower brewer. We clocked 11 minutes for eight cups, and if you’re watching your coffee maker brew like, well, a watched pot, it seems like it … takes forever. We understand the appeal of a slower brewing process (pour-over and Chemex fans, we hear you!), but 12 to 14 minutes for a full pot of coffee seems like a long time to wait when you’re thirsty for your morning Joe and you’re not doing it by hand. Finally, not everyone will want to spend more than $200 on a coffee maker. But many may.

While some consumers might be flummoxed by the technology of this higher-end product, others will embrace it and make it a centerpiece of their kitchen, and rightly so. Form plus function equals morning happiness here.

We had heard about the Technivorm Moccamaster, a machine beloved for its innovative and old-school industrial design, handmade and tested in the Netherlands since 1968, even before we received it for this story. Multiple friends reached out upon hearing that we were testing a Moccamaster, singing the brand’s praises, and one declared it superlative via Instagram DM: “Moccamaster? Test over!” And the Moccamaster arrives with its own best PR too. Its user manual applauds buyers: “Congratulations on your purchase of the World’s Finest Coffee Brewer!” (If you’re spending more than $300 on a coffee maker, perhaps the enthusiasm feels validating.)

Once we got the apparatus set up — which takes a little focus and time, to be honest — it really did pay off, with possibly the most delicious, hot, fresh cup of coffee we have ever tasted from a home-brewed machine. What’s more, you barely have time to peruse the morning news headlines before the process is done. The Moccamaster brewed 10 cups in less than six minutes, and, on a second trial, six cups in under four minutes. The brew function is almost jarringly fast: Once you turn on the machine, the brewing starts immediately. Then, seeing the water heat in the tank and bubble up through the water transfer tube into the brewer was a throwback to middle-school science experiments in the most pleasing way, like if a lava lamp produced fresh hot coffee after a few mesmerizing undulations.

We discovered much to love about the Moccamaster, but there also were elements we didn’t adore. Perhaps ironically, they’re about the design. Some love a more hands-on coffee-making process, but some might find that there are just too many moving parts here, literally. We needed to read the directions pretty closely to assemble the parts. Once assembled, and once we digested what was happening brew-process-wise, the machine became fairly easy to operate.

But each time you use this machine, you have to take the brew basket apart to add a new paper filter (yes, it requires a paper filter, if that makes a difference to you) and coffee grounds, and that basket removal sometimes disrupts the outlet arm and the reservoir lid — not a huge deal, but it could feel like you have to put your coffee maker back together from scratch every morning. Also, the basket lid and outlet arm, through which the hot water travels from the tube to the brew basket, get very hot during the process. It’s fine if you’re aware and cautious, but you wouldn’t want someone to wander up and unknowingly touch the hot part of the brewer.

And finally, perhaps our most significant beef with this model: When you return the glass carafe to the hot plate in between pours, the glass scrapes the warmer in a slightly cringey way.

The coffee that this striking machine yields, though, may diminish other distractions — we found ourselves moving this maker back to the kitchen counter time and again, because the brew process and its results were superior. If you, like us, are a fan of the Moccamaster, you’re likely to be one for many years to come, which will amortize the steep price tag accordingly.

We won’t go on and on about the Mr. Coffee 12-Cup, but it brewed a very workable 12 cups, in both taste and temperature, in just nine minutes. The machine came packaged in some pretty intense plastic and cardboard — the unboxing took a full five minutes and a pair of scissors — but once separated from its packaging, this machine’s a breeze to put together. The hardware is very easy to use (and to program to brew at a specific time), even without reading the directions. It’s compact — one of the best small drip coffee makers we tested — and durable, and the lid, brew basket, carafe and removable top half are all dishwasher safe, which wasn’t common among the machines we tested.

The testing process for these coffee makers was intensive, lasting more than a month. We evaluated each machine based on what would be most important to the user — namely, functionality, durability and design. We tested each machine at least twice (but four to eight times for some) with both dark and light roast freshly ground beans, did a programmed/timed brew when available, and tested the additional functions of the more specialty machines (single-cup, cold brew, tea, milk frothing). We jotted notes about every machine’s unboxing, read every instruction manual, handled and rehandled the hardware, timed the brew of each machine, noted the temperature of the resulting coffee, and tasted and had others taste and weigh in on user experience. We tried to get as acquainted as possible with each of these machines, became fond of a good many of them — and as a result, we drank way too much coffee over the month in question.

Read on for the categories and their breakdowns.

Brew function

- Optimal temperature: We didn’t take the actual temperature of the coffee from each machine, because we don’t think that’s how the average coffee drinker evaluates home brewing — experts recommend that coffee be brewed at between 195 and 205 degrees Fahrenheit, and served immediately, at 180 to 185 degrees — but we scored the perceived temp of each brew against all the others. We tasted each cup immediately after brewing, black, and then with added cold milk, and recorded the results.

- Taste: The taste of coffee is, obviously, subjective. Two people could spend a lifetime tasting the different coffee varietals and never agree on one. That being said, we tested each machine with both a dark roast and a light roast, keeping the amount of grounds consistent with the machine’s directions. As a result, some machines that recommended using more grounds yielded stronger brews; in those instances, we retested with fewer grounds accordingly.

- Time to brew: For each carafe brewed, we timed the process on an iPhone timer, both for a full carafe and half. For those machines that made single cups, we timed that process as well.

- Heat retention: We noted whether the machine brewed into a glass or a thermal carafe, and how hot the coffee remained a half hour to an hour after brewing.

- User-friendliness: We did an initial scan of each machine, evaluating whether a new customer would be able to brew coffee without reading the instruction manual. We then assessed whether the design of each machine is immediately intuitive, and on a more micro level, assessed the settings and buttons on the face of the machine, the markings on the water tank and carafe, how easy the carafe is to fill, and the design of the brew basket.

- Volume yield: We noted how many ounces each machine can brew.

- Programmability: We recorded whether you can program the machine to brew at a set time.

Durability

- Everyday durability: For this category, we assessed how the machine responded to being handled during setup, filling the water tank, adding the grounds, removing and replacing carafe to serve, cleanup, and how durable the hardware felt.

- Build quality: We noted what materials the machine is built from, e.g., plastic, metal, brushed metal, glass, and the tangible feel of each machine in a user’s hands.

- Serviceability: We noted how easy it was to open and take apart the removable parts of each machine, in case it ever needed to be serviced.

Setup and breakdown

- Ease of assembly: We observed how long it took to unbox the machine, put it together, and do an initial water flush before the product could be used.

- Size of machine: We assessed how much counter space each machine took up, and how easy it is to move and store.

- Ease of clean: After each brewing, we took note of how easy it was to clean the brew basket, the carafe, and the surrounding hardware.

Aesthetic

- First impression: We observed our first impression of each machine, noting details of design, color, size, feel — whether this machine looked attractive on our counter.

- Color options: We researched if the machine came in any colors besides black.

Warranty

- We checked the number of years of warranty of each machine.

Ninja Hot and Cold Brewed System

We tested two Ninja machines, both of which have some very appealing features. The hot and cold brew system brewed an excellent pot of hot coffee in less than five minutes, as well as a very tasty single cup (in multiple sizes), a harder feat to perfect. It also brews coffee intended to be served directly over ice, an option that lots of consumers will like. We love the cool, minimalist glass carafe, though the lid features a big hole in the middle for pouring, which can lead to some splashing.

This machine, though prolific in function, lost points because the water tank, plastic with prominent ridges, feels cheap and degrades the user experience a bit (with this machine, thankfully, the plastic tank is in the back, hidden from view, but it does need to be handled every time you add water). Another problem with this machine: The water tank doesn’t have measurement markings, only lines for half carafe, full carafe and two sizes of single cup. Without ounce or cup markings, how does one know how much water to add versus the amount of coffee grounds? The Ninja machines come with a special-sized coffee scoop, with a different amount on each end, but it was bothersome that the water and the coffee amounts couldn’t be more standardized without relying only on the provided removable accessories (which, for the record, are cute; there’s a removable frothing wand). The many performance features also mean a busy control panel that feels a bit high-maintenance.

The Ninja Specialty is similar to the hot and cold brewed one, with one major difference: The water tank is adjacent to the brew basket, and visible to the eye. This one also brews a very nice cup of hot fresh coffee, and has nifty added functions, too, like myriad sizes of individual cups, half and full carafes, and an over-ice option. The placement of the water tank front and center here, though, makes this one less appealing than the hot and cold option; the tank, similarly, feels flimsy and cheap, a factor that’s difficult to overlook in user experience. For those who like the Ninja brand products (they make blenders and other items), though, there’s a lot of function for your buck here.

The most basic of the Cuisinart options we tested, this one brewed a nearly perfect cup at, for this reviewer, a perfectly hot temp (even after adding significant cold milk, we still had a steaming hot cup), thanks to an adjustable carafe temp. This machine is solid and well-designed, with one downside (for us): Brewing time was 14 minutes for eight cups, nearly double the time of some of the other brewers we tested.

Cuisinart Coffee Center 10-Cup Thermal Coffee Maker and Single Serve Brewer

Our third Cuisinart brews only 10 cups into a thermal carafe, but has the handy bonus feature of a single-serve brew, with an attachment to use prepackaged coffee pods or an adorable mini filter to use fresh grounds. (Note: The mini filter is a bit of a chore to clean because it is so small.) Like its Cuisinart siblings above, this machine makes good coffee, but the single-serve brewer does make the hardware as a whole more cumbersome. One annoying design issue: There’s an on/off switch on the side of the machine, and its placement feels unintuitive.

The De’Longhi TrueBrew is a superautomatic machine, meaning it incorporates a grinder so you can start with whole beans and have it do the rest for you. At the touch of a button, it can produce a single cup or a whole pot of coffee. The TrueBrew is incredibly convenient, but it’s quite expensive, and despite its wide range of options, in our testing we got better-tasting coffee from dedicated pour-over coffee and espresso setups. Unless you really want the ease of use of a pod machine along with the ability to use fresh whole beans, it may not be for you.

The most affordable automatic drip machine we tested, the Black & Decker 12-cup, is also a solid choice. It brewed eight tasty cups in eight short minutes, making for a good user experience overall. Hardware-wise, it felt a bit less durable than its closest rival, the Mr. Coffee, but it’s programmable and super easy to use, for roughly the cost of two lattes with an extra shot.

The Bonavita Connoisseur has its fans, but we had multiple issues with the machine. This pleasingly retro-looking apparatus brews a nice cup quickly and at a good temperature, but the user experience leaves much to be desired. Simply put, the design feels flawed. The lid of the carafe needs to be removed before brewing, so the coffee just brews directly into a wide-open carafe — this was so counterintuitive to us, even after three or four brew tries, that it diminished the experience of the brew process. The brewer also gets very hot during brewing — so hot that we wondered if it might actually be a safety issue. Lastly, after brewing, we screwed the carafe lid back on and tried to return the carafe to underneath the brewer — sure, maybe we were still sleepy, maybe not enough caffeine yet — but the carafe doesn’t fit under the brewer with the lid on; the entire top of the machine popped off. This affects storage of the machine, too; because the carafe lid and the brew basket don’t both fit into the hardware at the same time, there’s always one piece loose.

We were giddy upon opening this fancy Breville brewer, which has much to offer: standard brew, fast, gold (what even is that, we wondered at first glance!), cold brew, single cup (with an attachment sold separately) and a setting you can customize to your preferences. The options are exciting, but also overwhelming. The user is prompted to enter the hardness of their water, on a hard-to-soft scale; do all home coffee drinkers know the texture of their tap water? Also, does the average coffee drinker know what Gold Cup certification is? These feel like niche details for an automatic drip machine.

Big picture, the Breville brewed a good pot of coffee, quite quickly, but we didn’t find it hot enough. The whole apparatus is beautifully designed, with sleek brushed metal and a lightweight, handsome carafe lovely enough to join a brunch table. But digging in further, we found this machine just to be… well, just a little too much. Too much hardware — it doesn’t fit easily under our cabinets. Too many options — we needed to read up on a bunch of coffee wisdom before we could even set up the machine to our preferences. There are lots of users who would find this machine the sweet spot of function and sophistication, and enjoy exploring all of its specialties, but for those looking for turnkey coffee-making, this is a little extra.
