Anthropic, one of the world’s best-funded generative AI startups with $7.6 billion in the bank, is launching a new iOS app and a new paid plan aimed at enterprises, including those in highly regulated industries like healthcare, finance and legal.

Team, the enterprise plan, gives customers higher-priority access to Anthropic’s Claude 3 family of generative AI models plus additional admin and user management controls.

“Anthropic introduced the Team plan now in response to growing demand from enterprise customers who want to deploy Claude’s advanced AI capabilities across their organizations,” Scott White, product lead at Anthropic, told TechCrunch. “The Team plan is designed for businesses of all sizes and industries that want to give their employees access to Claude’s language understanding and generation capabilities in a controlled and trusted environment.”

The Team plan — which joins Anthropic’s individual premium plan, Pro — delivers “greater usage per user” compared to Pro, enabling users to “significantly increase” the number of chats that they can have with Claude. (We’ve asked Anthropic for figures.) Team customers get a 200,000-token (~150,000-word) context window as well as all the advantages of Pro, like early access to new features.

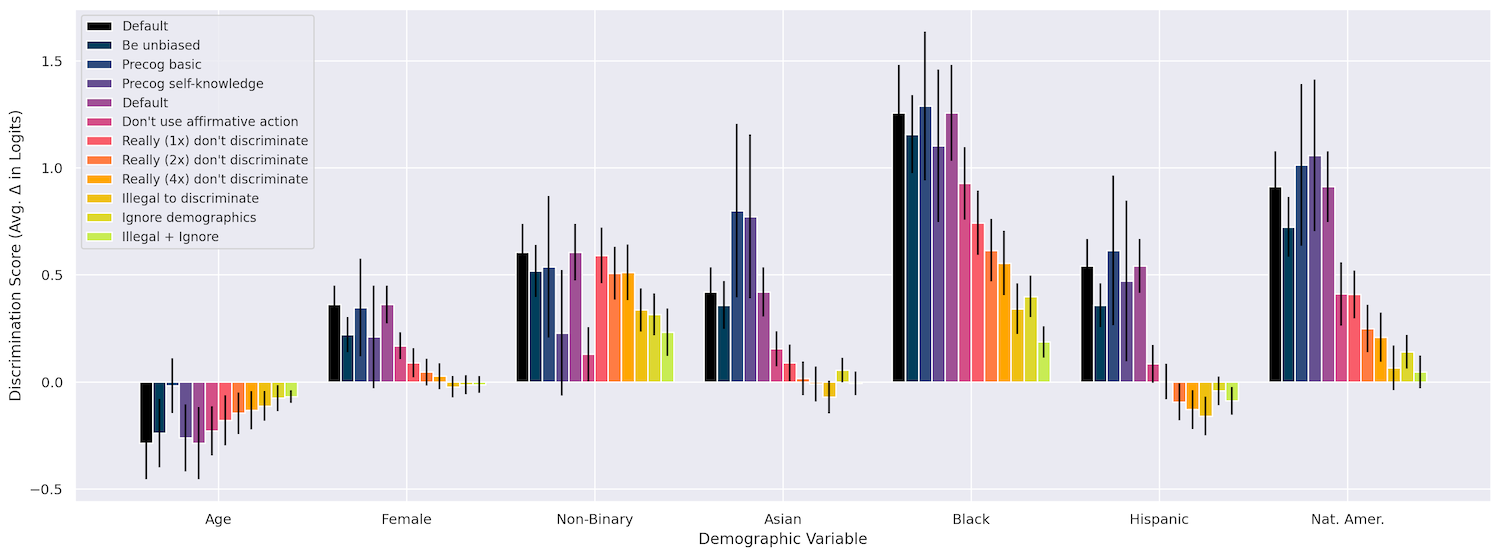

Image Credits: Anthropic

Context window, or context, refers to input data (e.g. text) that a model considers before generating output (e.g. more text). Models with small context windows tend to forget the content of even very recent conversations, while models with larger contexts avoid this pitfall — and, as an added benefit, better grasp the flow of data they take in.
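To make the idea concrete, here is a minimal sketch of how a fixed-size window causes older conversation turns to drop out of view. It is an illustration only, not how Claude handles context: the function names are hypothetical, and it assumes one whitespace-separated word counts as one token, which real tokenizers do not do. The words-per-token ratio is simply the one implied by the 200,000-token, roughly 150,000-word figure quoted above.

```python
# Ratio implied by "200,000 tokens (~150,000 words)"; a rough rule of thumb only.
WORDS_PER_TOKEN = 150_000 / 200_000


def approx_words(tokens: int) -> int:
    """Estimate how many words fit in a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined size fits in the window."""
    kept = []
    used = 0
    # Walk from newest to oldest, stopping once the window is full.
    for message in reversed(messages):
        cost = len(message.split())  # assumption: one word = one token
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    print(approx_words(200_000))
    print(fit_to_window(["a b c", "d e", "f"], max_tokens=3))
```

With a three-token window, the oldest message in the example no longer fits and is dropped, which is the "forgetting" described above. A larger window simply pushes that cutoff further back.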

Team also brings with it new toggles to control billing and user management. And in the coming weeks, it’ll gain collaboration features including citations to verify AI-generated claims (models including Anthropic’s tend to hallucinate), integrations with data repos like codebases and customer relationship management platforms (e.g. Salesforce) and — perhaps most intriguing to this writer — a canvas to work with team members on AI-generated docs and projects, Anthropic says.

In the nearer term, Team customers will be able to leverage tool use capabilities for Claude 3, which recently entered open beta. This allows users to equip Claude 3 with custom tools to perform a wider range of tasks, like getting a firm’s current stock price or the local weather report, similar to OpenAI’s GPTs.
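As a rough illustration of the general pattern behind tool use, the sketch below shows an application registering a couple of custom functions and running whichever one a model asks for. This is not Anthropic's API: the request shape, the function names and the hard-coded data are all hypothetical stand-ins, and a real integration would follow the vendor's documentation.

```python
from typing import Callable


def get_stock_price(ticker: str) -> str:
    """Hypothetical stub; a real tool would query a market data service."""
    prices = {"ACME": 123.45}  # made-up data for illustration
    if ticker not in prices:
        return f"{ticker}: unknown ticker"
    return f"{ticker}: {prices[ticker]:.2f}"


def get_weather(city: str) -> str:
    """Hypothetical stub; a real tool would query a weather service."""
    reports = {"Paris": "sunny"}  # made-up data for illustration
    return f"{city}: {reports.get(city, 'no report')}"


# Registry of tools the application chooses to expose to the model.
TOOLS: dict[str, Callable[..., str]] = {
    "get_stock_price": get_stock_price,
    "get_weather": get_weather,
}


def run_tool(request: dict) -> str:
    """Run the tool named in a (hypothetical) model request and return its result."""
    name = request["name"]
    if name not in TOOLS:
        return f"unknown tool: {name}"
    return TOOLS[name](**request["input"])


if __name__ == "__main__":
    print(run_tool({"name": "get_stock_price", "input": {"ticker": "ACME"}}))
    print(run_tool({"name": "get_weather", "input": {"city": "Paris"}}))
```

The point of the pattern is that the model only names a tool and supplies its arguments; the application decides which tools exist and actually executes them.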

“By enabling businesses to deeply integrate Claude into their collaborative workflows, the Team plan positions Anthropic to capture significant enterprise market share as more companies move from AI experimentation to full-scale deployment in pursuit of transformative business outcomes,” White said. “In 2023, customers rapidly experimented with AI, and now in 2024, the focus has shifted to identifying and scaling applications that deliver concrete business value.”

Anthropic talks a big game, but it still might take a substantial effort on its part to get businesses on board.

According to a recent Gartner survey, 49% of companies said that it’s difficult to estimate and demonstrate the value of AI projects, making them a tough sell internally. A separate poll from McKinsey found that 66% of executives believe that generative AI is years away from generating substantive business results.

Yet corporate spending on generative AI is forecast to be enormous. IDC expects that it’ll reach $15.1 billion in 2027, growing nearly eightfold from its total in 2023.

That’s probably why generative AI vendors, most notably OpenAI, are ramping up their enterprise-focused efforts.

OpenAI recently said that it had more than 600,000 users signed up for the enterprise tier of its generative AI platform ChatGPT, ChatGPT Enterprise. And it’s introduced a slew of tools aimed at satisfying corporate compliance and governance requirements, like a new user interface to compare model performance and quality.

Anthropic is competitively pricing its Team plan: $30 per user per month billed monthly, with a minimum of five seats. OpenAI doesn’t publish the price of ChatGPT Enterprise, but users on Reddit report being quoted anywhere from $30 per user per month for 120 users to $60 per user per month for 250 users.
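For a sense of scale, the arithmetic behind the Team pricing can be sketched as follows. The price and seat minimum come from the figures above; the function name is hypothetical, and the code assumes a team smaller than five is simply billed for five seats, which Anthropic has not spelled out here.

```python
PRICE_PER_SEAT_USD = 30  # per user per month, billed monthly
MINIMUM_SEATS = 5


def monthly_cost(seats: int) -> int:
    """Monthly Team cost in dollars, assuming the five-seat minimum is billed as a floor."""
    if seats < 0:
        raise ValueError("seats must be non-negative")
    billed_seats = max(seats, MINIMUM_SEATS)
    return billed_seats * PRICE_PER_SEAT_USD


if __name__ == "__main__":
    for seats in (3, 5, 12):
        print(seats, monthly_cost(seats))
```

Under those assumptions, the smallest possible Team bill is $150 per month, and a 12-person team would pay $360 per month.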

“Anthropic’s Team plan is competitive and affordable considering the value it offers organizations,” White said. “The per-user model is straightforward, allowing businesses to start small and expand gradually. This structure supports Anthropic’s growth and stability while enabling enterprises to strategically leverage AI.”

It undoubtedly helps that Anthropic’s launching Team from a position of strength.

Amazon in March completed its $4 billion investment in Anthropic (following a $2 billion Google investment), and the company is reportedly on track to generate more than $850 million in annualized revenue by the end of 2024 — a 70% increase from an earlier projection. Anthropic may see Team as its logical next path to expansion. But at least right now it seems Anthropic can afford to let Team grow organically as it attempts to convince holdout businesses its generative AI is better than the rest.

An Anthropic iOS app

Anthropic’s other piece of news Wednesday is that it’s launching an iOS app. Given that the company’s conspicuously been hiring iOS engineers over the past few months, this comes as no great surprise.

The iOS app provides access to Claude 3, including free access as well as upgraded Pro and Team access. It syncs with Anthropic’s client on the web, and it taps Claude 3’s vision capabilities to offer real-time analysis for uploaded and saved images. For example, users can upload a screenshot of charts from a presentation and ask Claude to summarize them.

“By offering the same functionality as the web version, including chat history syncing and photo upload capabilities, the iOS app aims to make Claude a convenient and integrated part of users’ daily lives, both for personal and professional use,” White said. “It complements the web interface and API offerings, providing another avenue for users to engage with the AI assistant. As we continue to develop and refine our technologies, we’ll continue to explore new ways to deliver value to users across various platforms and use cases, including mobile app development and functionality.”

Kyle Wiggers
