The Google Home system started as a simple wireless speaker that could take voice commands. It has since grown into a robust system for automating your home. Controlled by the Google Home app, it allows you to ask questions, launch apps and create routines that control your home’s devices. The Google Home app is available for iOS and Android devices.

The Google Home app allows you to ask questions and launch apps.

(Cyberguy.com)

What do you need to get started with Google Home?

To use Google Home, you’ll need a Google Home speaker such as the Google Nest Mini, the Google Home app and a Google/Gmail account. The Google Home app will walk you through the setup, and you’ll be able to add other information, like your location, so you can get local weather or traffic updates. You’ll also want to connect your Google Home app with other apps like Spotify or Google Photos to increase the device’s functionality.

What kinds of things can I do with Google Home?

In short, Google Home is your virtual butler, creating a world of possibilities for users like you and me. You can say basic voice commands to start a favorite playlist. If you have a question about absolutely anything, you can ask Google Assistant rather than look it up on your phone. You can also create a routine that gives you the weather and traffic report at a specific time each morning.

Home security is another popular use for Google Home. When an exterior light or motion sensor is triggered, Google Home can turn on a smart bulb inside the house, creating the impression that someone inside has noticed a sound outside. You can also create routines that turn on interior lights on a schedule while you’re away.
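For readers who like to see the logic spelled out, here is a minimal sketch of the two security routines described above. It is an illustration only and does not use any Google API: the `SmartBulb` and `SecurityRoutine` classes, their method names and the 7:30 p.m. schedule are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import time


@dataclass
class SmartBulb:
    """Hypothetical stand-in for an indoor smart bulb."""
    name: str
    is_on: bool = False


@dataclass
class SecurityRoutine:
    """Hypothetical routine: light the house on a sensor trigger or on a schedule."""
    bulbs: list
    away_schedule: list = field(default_factory=list)  # times of day to switch lights on

    def on_outdoor_trigger(self) -> None:
        # An exterior light or motion sensor fired, so turn the indoor bulbs on.
        for bulb in self.bulbs:
            bulb.is_on = True

    def on_clock_tick(self, now: time, away: bool) -> None:
        # While you are away, turn the bulbs on at each scheduled time.
        if away and now in self.away_schedule:
            for bulb in self.bulbs:
                bulb.is_on = True


hallway = SmartBulb("hallway")
routine = SecurityRoutine(bulbs=[hallway], away_schedule=[time(19, 30)])
routine.on_outdoor_trigger()
print(hallway.is_on)
```

In the real app you build these routines by picking a starter (a sensor event or a time) and an action (turning on a light); the sketch simply shows that same starter-and-action pairing.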

The Google Home app allows you to control your home’s devices.

(Cyberguy.com)

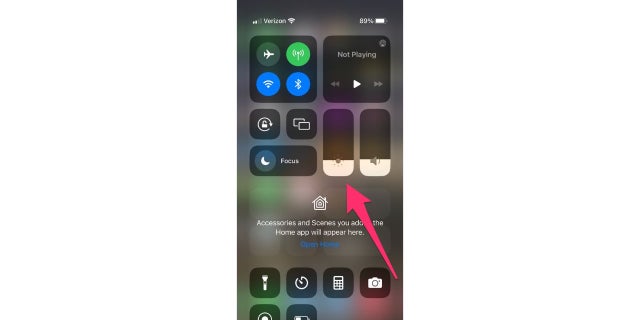

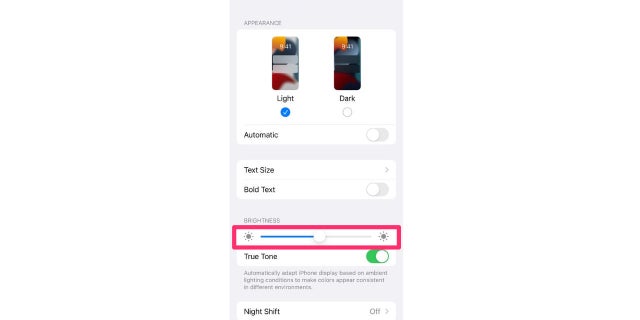

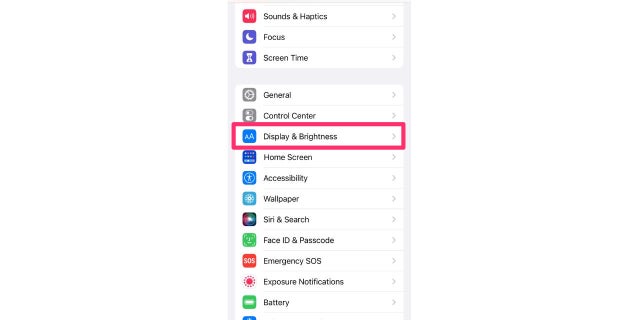

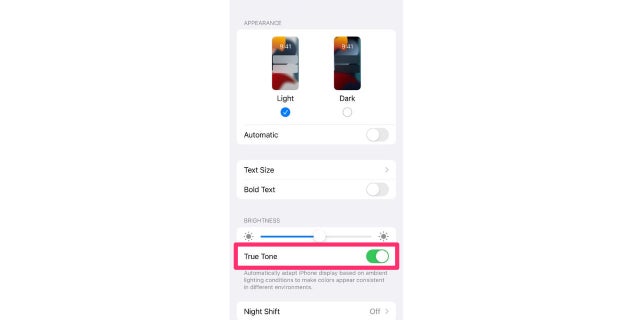

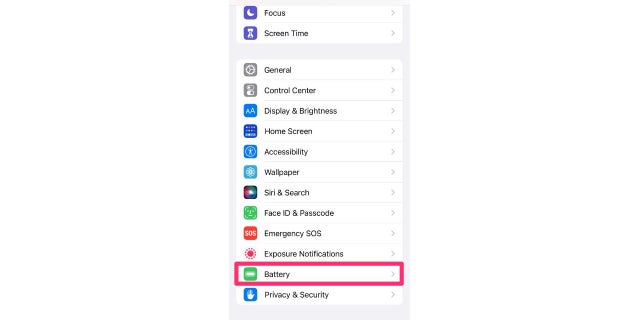

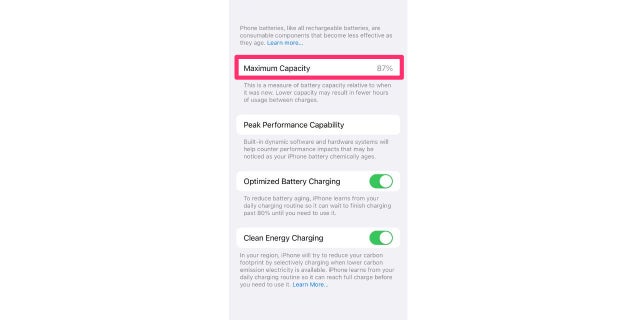

What types of devices work with Google Home?

There are hundreds of Google Home-enabled devices, with more coming on the market all the time:

- Smart plugs can allow users to control non-smart devices by providing or removing power. You can manage all of this through the Google Home app.

- Google Home can control robot vacuums.

- With smart Google Home-enabled doorbells or cameras, you can easily see who is at the door from anywhere in the house, your city or the world, as long as you have an internet connection.

- Smart thermostats allow you to manually control your home’s heating and cooling cycles or to automate them entirely using geofencing so that when the house is empty, the heating dials back.

- Window and door locks can be locked or unlocked remotely, and cameras can record exterior and interior movement.
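The geofencing idea in the thermostat item can be sketched in a few lines. This is a rough illustration under stated assumptions, not how Google Home or any thermostat actually implements it: the function names, the 200-meter radius, the two temperatures and the coordinates are all hypothetical. It checks whether any household phone is within a set distance of home and picks the heating target accordingly.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def target_temperature(home, phones, radius_m=200.0, comfort_c=21.0, away_c=16.0):
    """Return the comfort setting if any phone is inside the geofence, else the setback."""
    anyone_home = any(distance_m(*home, *phone) <= radius_m for phone in phones)
    return comfort_c if anyone_home else away_c


# Hypothetical coordinates, for illustration only.
home = (37.8858, -122.1180)
phone_at_home = (37.8859, -122.1181)
phone_across_the_bay = (37.7749, -122.4194)

print(target_temperature(home, [phone_at_home]))
print(target_temperature(home, [phone_across_the_bay]))
```

With no phones inside the radius, the function falls back to the lower setting, which mirrors the "heating dials back when the house is empty" behavior. For cooling, the setback would go the other way.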

Getting alerts when a device joins your Google Home Group

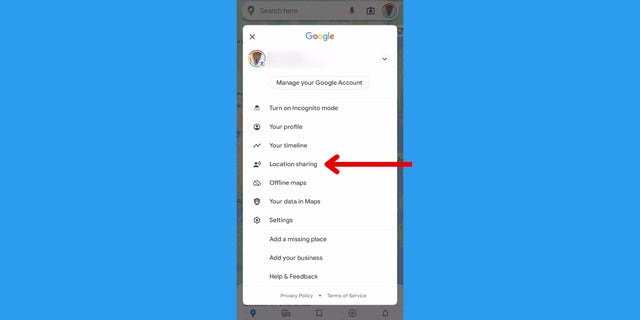

You should always be alerted when another device joins your Google Home Group, especially if you’re the only person in your household. Your Google Home Group consists of all the Google and Chromecast devices set up in your home, and you’ll always want to be aware and in control of them and not get any surprises. This way, you’ll always know if someone is trying to hack into your Google account or add another device without your consent. Here’s how to get alerts for your Google Home Group:

- Open your Google Home app.

- Go to Settings > General > Notifications.

- Toggle on People and devices.

The Google Home app is available for iOS and Android devices.

(Cyberguy.com)

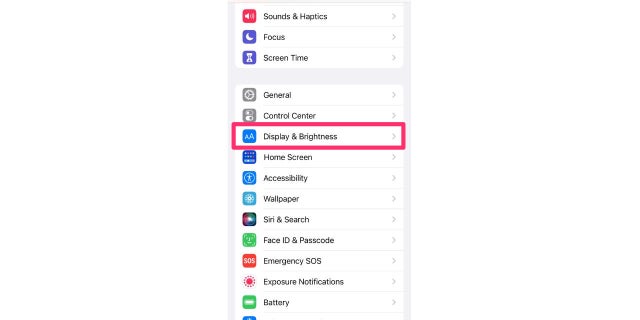

Privacy settings

Your privacy settings are among the most important features of your Google Home device. They govern which devices are connected to it, what private data it keeps and even your web activity. You should double-check which actions you have specifically authorized, and switch off anything you don’t remember consenting to. Here’s how to update your privacy settings:

- Open the Google Home app.

- Tap on your personal icon in the top right-hand corner.

- Select You from the menu bar.

- Tap Your Data in the Assistant to see what information you have listed.

Deleting some or all of your private data

Google Assistant saves audio recordings of every voice command Google Home has ever heard, which helps the software to understand your voice and execute future commands better. However, it isn’t critical to the device’s operation. Here’s how to delete that and all other data:

- Go to the Your data in the Assistant page.

- Under Your Assistant activity, tap My Activity.

- To the right of the search bar at the top of the page, tap the icon of three stacked dots.

- Tap Delete activity by.

- If you want to start over with a clean slate, tap All time. Otherwise, you can choose to delete all data collected in the last hour or last day or create a custom range, say, from the day you started using Google Home until last month.

- The app will ask you to confirm that you want to delete your Google Assistant activity for the specified period. Tap Delete to confirm.

- You’ll see the message “Deletion complete.” In the lower-right corner, tap Got it to return to the main Google Assistant Activity page.

The most extreme privacy option: pausing all activity

You can also set Google Assistant to no longer keep logs of your data; however, that may cause some hiccups with how well Google Assistant functions. If your privacy is of the utmost importance to you, and you’re willing to deal with anything from occasional glitches to an entirely non-functional Google Assistant, follow these steps from the main Google Assistant Activity page:

- Scroll down to Web & App Activity is on, and tap Change setting.

- Turn off the toggle beside Web & App Activity.

A screen will pop up, warning you that “pausing Web & App Activity may limit or disable more personalized experiences across Google services.” At the bottom of that screen, press Pause to stop Google from logging your activity. Note that changing this setting does not delete your personal data from Google. It only prevents Google Assistant from recording more data going forward.

After you press Pause, you’ll be returned to the main Google Assistant Activity page.

The fun stuff – making calls

One of the coolest features of Google Home is that you can make calls just by asking. For this feature to work properly, however, it needs to be set up first. Here’s how to make sure that Google Home always displays your primary phone number when you request a call to be made:

- Open the Google Home app.

- Go to Settings.

- Under Google Assistant Services, tap Voice & Video Calls.

- Select Mobile Calling.

- If it’s not set up yet, select Your own number, and then add or change your phone number. Google will then send a verification code for you to enter on the next screen.

- Once your number is added, make sure Your own phone number is selected underneath Your linked services.

- Go to Contacts, and select Upload now to sync contacts from your phone.

Nest Learning Thermostat displaying Google logo in smart home in Lafayette, California, January 17, 2021.

(Photo by Smith Collection/Gado/Getty Images.)

Changing your nickname

One of the most fun features is having your Google Home device call you by a nickname. It can be any name you wish, even something as silly as ‘Big Foot’ or ‘Mr. President’ (and yes, cuss words are included). Here’s how to set it:

- Open the Google Home app.

- Go to Settings.

- Scroll down, and select More settings.

- Under You, tap Nickname.

- Go to What should the Assistant call you? Type in the Nickname you wish to use.

- Tap Play to hear how Google Assistant says your name. If it says the name incorrectly, try spelling it phonetically instead.

Creating a speaker group

There’s nothing better than jamming to your favorite music. You can enhance the listening experience by doubling or even tripling your sound: group multiple speakers to create a whole-house audio system and turn it into a real party. Here’s how:

- Open the Google Home app, and tap the + sign in the upper left corner.

- Tap Create Speaker Group, and select all the speakers you wish to add.

- Tap Next.

- Name the speaker group, and tap Save.
